fitclinear

Fit binary linear classifier to high-dimensional data

Syntax

Description

fitclinear trains linear classification models for two-class (binary) learning with high-dimensional, full or sparse predictor data. Available linear classification models include regularized support vector machines (SVM) and logistic regression models. fitclinear minimizes the objective function using techniques that reduce computing time (e.g., stochastic gradient descent).

For reduced computation time on a high-dimensional data set that includes many predictor variables, train a linear classification model by using fitclinear. For low- through medium-dimensional predictor data sets, see Alternatives for Lower-Dimensional Data.

To train a linear classification model for multiclass learning by combining SVM or logistic regression binary classifiers using error-correcting output codes, see fitcecoc.

Mdl = fitclinear(Tbl,ResponseVarName) trains a linear classification model using the predictor variables in the table Tbl and the class labels in Tbl.ResponseVarName.

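Mdl = fitclinear(X,Y,Name,Value) specifies options using one or more name-value pair arguments. For example, you can cross-validate the model by using the 'Kfold' name-value pair argument. The cross-validation results determine how well the model generalizes.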

[Mdl,FitInfo,HyperparameterOptimizationResults] = fitclinear(___) also returns the hyperparameter optimization results when you specify OptimizeHyperparameters.

[Mdl,FitInfo,AggregateOptimizationResults] = fitclinear(___) also returns AggregateOptimizationResults, which contains hyperparameter optimization results when you specify the OptimizeHyperparameters and HyperparameterOptimizationOptions name-value arguments. You must also specify the ConstraintType and ConstraintBounds options of HyperparameterOptimizationOptions. You can use this syntax to optimize on compact model size instead of cross-validation loss, and to perform a set of multiple optimization problems that have the same options but different constraint bounds.

Examples

Train a binary, linear classification model using support vector machines, dual SGD, and ridge regularization.

Load the NLP data set.

load nlpdata

X is a sparse matrix of predictor data, and Y is a categorical vector of class labels. There are more than two classes in the data.

Identify the labels that correspond to the Statistics and Machine Learning Toolbox™ documentation web pages.

Ystats = Y == 'stats';

Train a binary, linear classification model that can identify whether the word counts in a documentation web page are from the Statistics and Machine Learning Toolbox™ documentation. Train the model using the entire data set. Determine how well the optimization algorithm fit the model to the data by extracting a fit summary.

rng(1); % For reproducibility

[Mdl,FitInfo] = fitclinear(X,Ystats)

Mdl =

ClassificationLinear

ResponseName: 'Y'

ClassNames: [0 1]

ScoreTransform: 'none'

Beta: [34023×1 double]

Bias: -1.0059

Lambda: 3.1674e-05

Learner: 'svm'

Properties, Methods

FitInfo = struct with fields:

Lambda: 3.1674e-05

Objective: 5.3783e-04

PassLimit: 10

NumPasses: 10

BatchLimit: []

NumIterations: 238561

GradientNorm: NaN

GradientTolerance: 0

RelativeChangeInBeta: 0.0562

BetaTolerance: 1.0000e-04

DeltaGradient: 1.4582

DeltaGradientTolerance: 1

TerminationCode: 0

TerminationStatus: {'Iteration limit exceeded.'}

Alpha: [31572×1 double]

History: []

FitTime: 0.0712

Solver: {'dual'}

Mdl is a ClassificationLinear model. You can pass Mdl and the training or new data to loss to inspect the in-sample classification error. Or, you can pass Mdl and new predictor data to predict to predict class labels for new observations.

FitInfo is a structure array containing, among other things, the termination status (TerminationStatus) and how long the solver took to fit the model to the data (FitTime). It is good practice to use FitInfo to determine whether optimization-termination measurements are satisfactory. Because training time is small, you can try to retrain the model, but increase the number of passes through the data. This can improve measures like DeltaGradient.
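The retraining suggestion above can be sketched as follows. This is a minimal sketch, assuming X, Ystats, and FitInfo from the preceding steps are still in the workspace; the value 30 for 'PassLimit' and the names MdlMorePasses and FitInfoMorePasses are illustrative choices, not recommendations from the documentation.

```matlab
% Sketch: retrain with more passes and compare convergence diagnostics.
% Assumes X, Ystats, and FitInfo from the steps above are in the workspace.
rng(1); % For reproducibility
[MdlMorePasses,FitInfoMorePasses] = fitclinear(X,Ystats,'PassLimit',30); % 30 is illustrative

% Compare the termination measurements of the two fits
fprintf('DeltaGradient: %.4f (10 passes) vs. %.4f (30 passes)\n', ...
    FitInfo.DeltaGradient,FitInfoMorePasses.DeltaGradient);
disp(FitInfoMorePasses.TerminationStatus)
```

Whether DeltaGradient actually improves depends on the data and the solver, so inspect the new fit summary rather than assuming convergence.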

To determine a good lasso-penalty strength for a linear classification model that uses a logistic regression learner, implement 5-fold cross-validation.

Load the NLP data set.

load nlpdata

X is a sparse matrix of predictor data, and Y is a categorical vector of class labels. There are more than two classes in the data.

The models should identify whether the word counts in a web page are from the Statistics and Machine Learning Toolbox™ documentation. So, identify the labels that correspond to the Statistics and Machine Learning Toolbox™ documentation web pages.

Ystats = Y == 'stats';

Create a set of 11 logarithmically spaced regularization strengths from $10^{-6}$ through $10^{-0.5}$.

Lambda = logspace(-6,-0.5,11);

Cross-validate the models. To increase execution speed, transpose the predictor data and specify that the observations are in columns. Estimate the coefficients using SpaRSA. Lower the tolerance on the gradient of the objective function to 1e-8.

X = X';
rng(10); % For reproducibility
CVMdl = fitclinear(X,Ystats,'ObservationsIn','columns','KFold',5,...
    'Learner','logistic','Solver','sparsa','Regularization','lasso',...
    'Lambda',Lambda,'GradientTolerance',1e-8)

CVMdl =

ClassificationPartitionedLinear

CrossValidatedModel: 'Linear'

ResponseName: 'Y'

NumObservations: 31572

KFold: 5

Partition: [1×1 cvpartition]

ClassNames: [0 1]

ScoreTransform: 'none'

Properties, Methods

numCLModels = numel(CVMdl.Trained)

numCLModels = 5

CVMdl is a ClassificationPartitionedLinear model. Because fitclinear implements 5-fold cross-validation, CVMdl contains 5 ClassificationLinear models that the software trains on each fold.

Display the first trained linear classification model.

Mdl1 = CVMdl.Trained{1}

Mdl1 =

ClassificationLinear

ResponseName: 'Y'

ClassNames: [0 1]

ScoreTransform: 'logit'

Beta: [34023×11 double]

Bias: [-13.2949 -13.2950 -13.2950 -13.2950 -9.4521 -7.1038 -5.4345 -4.6431 -3.6889 -3.1589 -2.9793]

Lambda: [1.0000e-06 3.5481e-06 1.2589e-05 4.4668e-05 1.5849e-04 5.6234e-04 0.0020 0.0071 0.0251 0.0891 0.3162]

Learner: 'logistic'

Properties, Methods

Mdl1 is a ClassificationLinear model object. fitclinear constructed Mdl1 by training on the first four folds. Because Lambda is a sequence of regularization strengths, you can think of Mdl1 as 11 models, one for each regularization strength in Lambda.

Estimate the cross-validated classification error.

ce = kfoldLoss(CVMdl);

Because there are 11 regularization strengths, ce is a 1-by-11 vector of classification error rates.

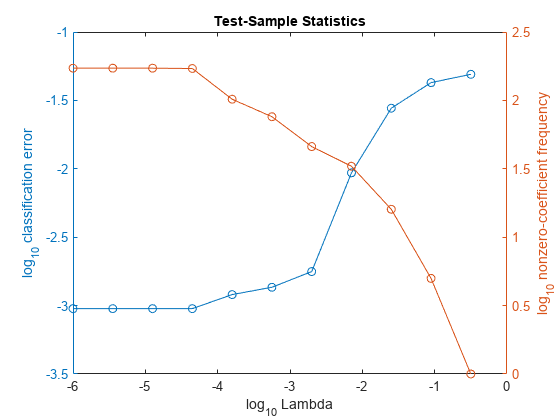

Higher values of Lambda lead to predictor variable sparsity, which is a good quality of a classifier. For each regularization strength, train a linear classification model using the entire data set and the same options as when you cross-validated the models. Determine the number of nonzero coefficients per model.

Mdl = fitclinear(X,Ystats,'ObservationsIn','columns',...
    'Learner','logistic','Solver','sparsa','Regularization','lasso',...
    'Lambda',Lambda,'GradientTolerance',1e-8);
numNZCoeff = sum(Mdl.Beta~=0);

In the same figure, plot the cross-validated, classification error rates and frequency of nonzero coefficients for each regularization strength. Plot all variables on the log scale.

figure;
[h,hL1,hL2] = plotyy(log10(Lambda),log10(ce),...
    log10(Lambda),log10(numNZCoeff));
hL1.Marker = 'o';
hL2.Marker = 'o';
ylabel(h(1),'log_{10} classification error')
ylabel(h(2),'log_{10} nonzero-coefficient frequency')
xlabel('log_{10} Lambda')
title('Test-Sample Statistics')
hold off

Choose the index of the regularization strength that balances predictor variable sparsity and low classification error. In this case, the seventh value in Lambda (approximately 0.0020) is a reasonable choice.

idxFinal = 7;

Select the model from Mdl with the chosen regularization strength.

MdlFinal = selectModels(Mdl,idxFinal);

MdlFinal is a ClassificationLinear model containing one regularization strength. To estimate labels for new observations, pass MdlFinal and the new data to predict.
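As a minimal sketch of that last step, assuming MdlFinal and the transposed X (observations in columns) from the steps above are in the workspace: the name XNew is hypothetical, and its columns are reused training observations standing in for genuinely new data.

```matlab
% Sketch: predict labels for a few observations with the selected model.
% Assumes MdlFinal and the transposed X (observations in columns) from above.
XNew = X(:,1:5); % stand-in for new data; these columns are training observations
labels = predict(MdlFinal,XNew,'ObservationsIn','columns')
```

Because the model was trained with observations in columns, the same orientation is passed to predict.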

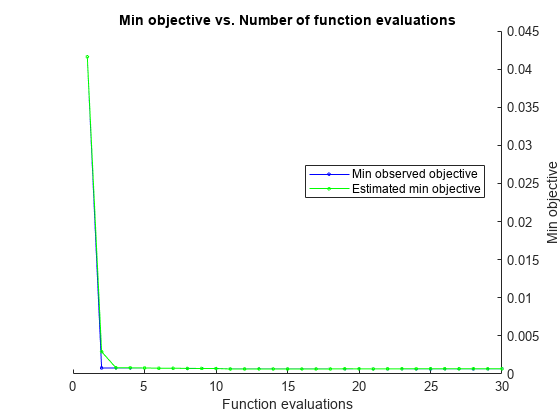

This example shows how to minimize the cross-validation error in a linear classifier using fitclinear. The example uses the NLP data set.

Load the NLP data set.

load nlpdata

X is a sparse matrix of predictor data, and Y is a categorical vector of class labels. There are more than two classes in the data.

The models should identify whether the word counts in a web page are from the Statistics and Machine Learning Toolbox™ documentation. Identify the relevant labels.

X = X';

Ystats = Y == 'stats';

Optimize the classification using the 'auto' parameters.

For reproducibility, set the random seed and use the 'expected-improvement-plus' acquisition function.

rng default
Mdl = fitclinear(X,Ystats,'ObservationsIn','columns','Solver','sparsa',...
    'OptimizeHyperparameters','auto','HyperparameterOptimizationOptions',...
    struct('AcquisitionFunctionName','expected-improvement-plus'))

|=====================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Lambda | Learner |

| | result | | runtime | (observed) | (estim.) | | |

|=====================================================================================================|

| 1 | Best | 0.041619 | 4.6869 | 0.041619 | 0.041619 | 0.077903 | logistic |

| 2 | Best | 0.00072849 | 4.9582 | 0.00072849 | 0.0028767 | 2.1405e-09 | logistic |

| 3 | Accept | 0.049221 | 5.6865 | 0.00072849 | 0.00075737 | 0.72101 | svm |

| 4 | Accept | 0.00079184 | 5.2474 | 0.00072849 | 0.00074989 | 3.4734e-07 | svm |

| 5 | Accept | 0.00079184 | 4.9249 | 0.00072849 | 0.0007292 | 1.1738e-08 | logistic |

| 6 | Accept | 0.00085519 | 5.2196 | 0.00072849 | 0.00072741 | 2.473e-09 | svm |

| 7 | Accept | 0.00079184 | 5.0766 | 0.00072849 | 0.00072511 | 3.1854e-08 | svm |

| 8 | Accept | 0.00088686 | 5.2478 | 0.00072849 | 0.00072227 | 3.1717e-10 | svm |

| 9 | Accept | 0.00076017 | 4.7641 | 0.00072849 | 0.00068193 | 3.1837e-10 | logistic |

| 10 | Accept | 0.00079184 | 5.4026 | 0.00072849 | 0.00072873 | 1.1258e-07 | svm |

| 11 | Accept | 0.00072849 | 4.6544 | 0.00072849 | 0.00070379 | 2.5414e-09 | logistic |

| 12 | Accept | 0.00076017 | 7.9971 | 0.00072849 | 0.00074726 | 2.1518e-07 | logistic |

| 13 | Best | 0.00069682 | 5.1575 | 0.00069682 | 0.00066872 | 6.3482e-08 | logistic |

| 14 | Accept | 0.00072849 | 5.3838 | 0.00069682 | 0.00069209 | 6.9491e-08 | logistic |

| 15 | Accept | 0.00072849 | 4.8787 | 0.00069682 | 0.00069242 | 8.0597e-10 | logistic |

| 16 | Accept | 0.00069682 | 5.0402 | 0.00069682 | 0.00069336 | 7.5311e-08 | logistic |

| 17 | Best | 0.00066515 | 5.2935 | 0.00066515 | 0.00068727 | 7.8467e-08 | logistic |

| 18 | Accept | 0.0012353 | 6.1672 | 0.00066515 | 0.00068961 | 0.00083275 | svm |

| 19 | Accept | 0.00076017 | 5.7524 | 0.00066515 | 0.00068973 | 5.0781e-05 | svm |

| 20 | Accept | 0.00085519 | 4.2822 | 0.00066515 | 0.00069004 | 0.00022104 | svm |

|=====================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Lambda | Learner |

| | result | | runtime | (observed) | (estim.) | | |

|=====================================================================================================|

| 21 | Accept | 0.00082351 | 8.0975 | 0.00066515 | 0.00069045 | 4.5396e-06 | svm |

| 22 | Accept | 0.0010136 | 22.105 | 0.00066515 | 0.00070695 | 4.5995e-06 | logistic |

| 23 | Accept | 0.00095021 | 22.792 | 0.00066515 | 0.00070002 | 1.1742e-06 | logistic |

| 24 | Accept | 0.00085519 | 6.9897 | 0.00066515 | 0.00070026 | 1.6481e-05 | svm |

| 25 | Accept | 0.00085519 | 6.0904 | 0.00066515 | 0.00070039 | 1.1552e-06 | svm |

| 26 | Accept | 0.00079184 | 4.4682 | 0.00066515 | 0.00070057 | 1.0115e-08 | svm |

| 27 | Accept | 0.00076017 | 4.9767 | 0.00066515 | 0.00070063 | 8.2618e-10 | logistic |

| 28 | Accept | 0.00072849 | 4.8225 | 0.00066515 | 0.00070162 | 3.6577e-08 | logistic |

| 29 | Accept | 0.00082351 | 5.5341 | 0.00066515 | 0.00071921 | 9.6374e-08 | logistic |

| 30 | Accept | 0.00076017 | 4.7031 | 0.00066515 | 0.00071914 | 3.1846e-10 | logistic |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 210.5367 seconds

Total objective function evaluation time: 196.4015

Best observed feasible point:

Lambda Learner

__________ ________

7.8467e-08 logistic

Observed objective function value = 0.00066515

Estimated objective function value = 0.00072227

Function evaluation time = 5.2935

Best estimated feasible point (according to models):

Lambda Learner

__________ ________

6.9491e-08 logistic

Estimated objective function value = 0.00071914

Estimated function evaluation time = 5.1761

Mdl =

ClassificationLinear

ResponseName: 'Y'

ClassNames: [0 1]

ScoreTransform: 'logit'

Beta: [34023×1 double]

Bias: -10.0364

Lambda: 6.9491e-08

Learner: 'logistic'

Properties, Methods

Input Arguments

Predictor data, specified as an n-by-p full or sparse matrix.

The length of Y and the number of observations in X must be equal.

Note

If you orient your predictor matrix so that observations correspond to columns and specify 'ObservationsIn','columns', then you might experience a significant reduction in optimization execution time.

Data Types: single | double

Class labels to which the classification model is trained, specified as a categorical, character, or string array, logical or numeric vector, or cell array of character vectors.

fitclinear supports only binary classification. Either Y must contain exactly two distinct classes, or you must specify two classes for training by using the 'ClassNames' name-value pair argument. For multiclass learning, see fitcecoc.

The length of Y must be equal to the number of observations in X or Tbl.

If Y is a character array, then each label must correspond to one row of the array.

A good practice is to specify the class order using the ClassNames name-value pair argument.

Data Types: char | string | cell | categorical | logical | single | double

Sample data used to train the model, specified as a table. Each row of Tbl corresponds to one observation, and each column corresponds to one predictor variable. Multicolumn variables and cell arrays other than cell arrays of character vectors are not allowed.

Optionally, Tbl can contain a column for the response variable and a column for the observation weights.

The response variable must be a categorical, character, or string array, a logical or numeric vector, or a cell array of character vectors.

fitclinear supports only binary classification. Either the response variable must contain exactly two distinct classes, or you must specify two classes for training by using the ClassNames name-value argument. For multiclass learning, see fitcecoc.

A good practice is to specify the order of the classes in the response variable by using the ClassNames name-value argument.

The column for the weights must be a numeric vector.

You must specify the response variable in Tbl by using ResponseVarName or formula and specify the observation weights in Tbl by using Weights.

Specify the response variable by using ResponseVarName: fitclinear uses the remaining variables as predictors. To use a subset of the remaining variables in Tbl as predictors, specify predictor variables by using PredictorNames.

Define a model specification by using formula: fitclinear uses a subset of the variables in Tbl as predictor variables and the response variable, as specified in formula.

If Tbl does not contain the response variable, then specify a response variable by using Y. The length of the response variable Y and the number of rows in Tbl must be equal. To use a subset of the variables in Tbl as predictors, specify predictor variables by using PredictorNames.

Data Types: table

Response variable name, specified as the name of a variable in Tbl.

You must specify ResponseVarName as a character vector or string scalar. For example, if the response variable Y is stored as Tbl.Y, then specify it as "Y". Otherwise, the software treats all columns of Tbl, including Y, as predictors when training the model.

The response variable must be a categorical, character, or string array; a logical or numeric vector; or a cell array of character vectors. If Y is a character array, then each element of the response variable must correspond to one row of the array.

A good practice is to specify the order of the classes by using the ClassNames name-value argument.

Data Types: char | string

Explanatory model of the response variable and a subset of the predictor variables, specified as a character vector or string scalar in the form "Y~x1+x2+x3". In this form, Y represents the response variable, and x1, x2, and x3 represent the predictor variables.

To specify a subset of variables in Tbl as predictors for training the model, use a formula. If you specify a formula, then the software does not use any variables in Tbl that do not appear in formula.

The variable names in the formula must be both variable names in Tbl (Tbl.Properties.VariableNames) and valid MATLAB® identifiers. You can verify the variable names in Tbl by using the isvarname function. If the variable names are not valid, then you can convert them by using the matlab.lang.makeValidName function.

Data Types: char | string
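The name check described for formula can be sketched as below. The table, its variable names, and the string 'word count' are hypothetical; only isvarname and matlab.lang.makeValidName come from the text above.

```matlab
% Sketch: check table variable names before using them in a formula.
% The table and its variable names are hypothetical.
Tbl = table([1;2;3],[4;5;6],[true;false;true], ...
    'VariableNames',{'x1','word_count','Y'});
names = Tbl.Properties.VariableNames;
isValid = cellfun(@isvarname,names)   % true where a name is a valid MATLAB identifier

% makeValidName converts an invalid name into a valid identifier
fixedName = matlab.lang.makeValidName('word count')
```

If any entry of isValid is false, rename that variable (for example, with the converted name) before building the formula string.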

Note

The software treats NaN, empty character vector (''), empty string (""), <missing>, and <undefined> elements as missing values, and removes observations with any of these characteristics:

Missing value in the response variable (for example, Y or ValidationData{2})

At least one missing value in a predictor observation (for example, row in X or ValidationData{1})

NaN value or 0 weight (for example, value in Weights or ValidationData{3})

For memory-usage economy, it is best practice to remove observations containing missing values from your training data manually before training.
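One way to do that manual cleanup is sketched here. The inputs X, Y, and w are small hypothetical arrays with observations in rows; the three conditions mirror the characteristics listed in the note.

```matlab
% Sketch: drop observations with missing values before training.
% X (observations in rows), Y, and w are small hypothetical inputs.
X = [1 2; NaN 3; 4 5; 6 7];
Y = categorical({'a';'b';'';'a'});   % '' becomes <undefined>
w = [1; 1; 1; 0];                    % observation weights

keep = ~any(isnan(X),2) ...          % no NaN in any predictor
     & ~isundefined(Y) ...           % response is defined
     & ~isnan(w) & w > 0;            % weight is usable

X = X(keep,:);
Y = Y(keep);
w = w(keep);
```

In this toy input only the first observation passes all three checks.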

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose Name in quotes.

Example: 'ObservationsIn','columns','Learner','logistic','CrossVal','on' specifies that the columns of the predictor matrix correspond to observations, to implement logistic regression, and to implement 10-fold cross-validation.

Note

You cannot use any cross-validation name-value argument together with the OptimizeHyperparameters name-value argument. You can modify the cross-validation for OptimizeHyperparameters only by using the HyperparameterOptimizationOptions name-value argument.

Linear Classification Options

Regularization term strength, specified as the comma-separated pair consisting of 'Lambda' and 'auto', a nonnegative scalar, or a vector of nonnegative values.

For 'auto', Lambda = 1/n. If you specify a cross-validation name-value pair argument (e.g., CrossVal), then n is the number of in-fold observations. Otherwise, n is the training sample size.

For a vector of nonnegative values, fitclinear sequentially optimizes the objective function for each distinct value in Lambda in ascending order. If Solver is 'sgd' or 'asgd' and Regularization is 'lasso', fitclinear does not use the previous coefficient estimates as a warm start for the next optimization iteration. Otherwise, fitclinear uses warm starts. If Regularization is 'lasso', then any coefficient estimate of 0 retains its value when fitclinear optimizes using subsequent values in Lambda. fitclinear returns coefficient estimates for each specified regularization strength.

Example: 'Lambda',10.^(-(10:-2:2))

Data Types: char | string | double | single
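A minimal sketch of the two ways to set Lambda, using plain arithmetic only. The sample size is the NumObservations value shown in the examples above; the variable names are hypothetical.

```matlab
% Sketch: the 'auto' value and a vector of regularization strengths.
n = 31572;                       % training sample size, as in the NLP examples
lambdaAuto = 1/n                 % value that 'auto' corresponds to without cross-validation

lambdaGrid = 10.^(-(10:-2:2))    % grid from the Example line
issorted(lambdaGrid)             % checks whether the grid is already in ascending order
```

With no cross-validation, 1/n here agrees with the Lambda of about 3.1674e-05 reported in the first example's model display.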

Linear classification model type, specified as the comma-separated pair consisting of 'Learner' and 'svm' or 'logistic'.

In this table, $f(x) = x\beta + b$.

β is a vector of p coefficients.

x is an observation from p predictor variables.

b is the scalar bias.

| Value | Algorithm | Response Range | Loss Function |
|---|---|---|---|
| 'svm' | Support vector machine | y ∊ {–1,1}; 1 for the positive class and –1 otherwise | Hinge: $\ell[y,f(x)] = \max[0,\, 1 - y f(x)]$ |
| 'logistic' | Logistic regression | Same as 'svm' | Deviance (logistic): $\ell[y,f(x)] = \log\{1 + \exp[-y f(x)]\}$ |

Example: 'Learner','logistic'
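To make the two loss functions concrete, here is a small sketch that evaluates them for one observation. It assumes the standard definitions, hinge loss max(0, 1 − y·f) and logistic deviance log(1 + exp(−y·f)), with score f = xβ + b; all numeric values are hypothetical.

```matlab
% Sketch: evaluate the hinge and logistic (deviance) losses for one observation.
% beta, b, x, and y are hypothetical values; f = x*beta + b is the linear score.
beta = [0.5; -1.0];      % coefficient vector (p = 2)
b    = 0.25;             % scalar bias
x    = [2 1];            % one observation as a row vector
y    = 1;                % label coded as +1 (positive class) or -1

f = x*beta + b;                       % classification score
hingeLoss    = max(0,1 - y*f)         % SVM loss
logisticLoss = log(1 + exp(-y*f))     % logistic regression loss
```

The hinge loss is exactly zero once y·f reaches 1, while the logistic loss stays positive for every score.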

Predictor data observation dimension, specified as "rows" or "columns".

Note

If you orient your predictor matrix so that observations correspond to columns and specify ObservationsIn="columns", then you might experience a significant reduction in computation time. You cannot specify ObservationsIn="columns" for predictor data in a table.

Example: ObservationsIn="columns"

Data Types: char | string

Complexity penalty type, specified as the comma-separated pair consisting of 'Regularization' and 'lasso' or 'ridge'.

The software composes the objective function for minimization from the sum of the average loss function (see Learner) and the regularization term in this table.

| Value | Description |
|---|---|
| 'lasso' | Lasso (L1) penalty: $\lambda \sum_{j=1}^{p} \lvert \beta_j \rvert$ |
| 'ridge' | Ridge (L2) penalty: $\frac{\lambda}{2} \sum_{j=1}^{p} \beta_j^2$ |

To specify the regularization term strength, which is λ in the expressions, use Lambda.

The software excludes the bias term (β0) from the regularization penalty.

If Solver is 'sparsa', then the default value of Regularization is 'lasso'. Otherwise, the default is 'ridge'.

Tip

For predictor variable selection, specify 'lasso'. For more on variable selection, see Introduction to Feature Selection.

For optimization accuracy, specify 'ridge'.

Example: 'Regularization','lasso'
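The two penalty terms can be compared numerically with a short sketch. It assumes the usual forms, λ·Σ|βj| for lasso and (λ/2)·Σβj² for ridge; the coefficient vector and λ are hypothetical.

```matlab
% Sketch: compute the two penalty terms for a hypothetical coefficient vector.
% Assumes lasso = lambda*sum(|beta_j|) and ridge = (lambda/2)*sum(beta_j^2).
beta   = [0.5; 0; -2; 1];   % illustrative coefficients; the bias is not included
lambda = 1e-2;              % illustrative regularization strength

lassoPenalty = lambda*sum(abs(beta))     % L1 penalty
ridgePenalty = (lambda/2)*sum(beta.^2)   % L2 penalty
```

The L1 penalty charges small coefficients proportionally more than the L2 penalty does, which is why lasso tends to drive them to exactly zero.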

Objective function minimization technique, specified as the comma-separated pair consisting of 'Solver' and a character vector or string scalar, a string array, or a cell array of character vectors with values from this table.

| Value | Description | Restrictions |
|---|---|---|
| 'sgd' | Stochastic gradient descent (SGD) [4][2] | |
| 'asgd' | Average stochastic gradient descent (ASGD) [7] | |
| 'dual' | Dual SGD for SVM [1][6] | Regularization must be 'ridge' and Learner must be 'svm'. |
| 'bfgs' | Broyden-Fletcher-Goldfarb-Shanno quasi-Newton algorithm (BFGS) [3] | Inefficient if X is very high-dimensional. Regularization must be 'ridge'. |
| 'lbfgs' | Limited-memory BFGS (LBFGS) [3] | Regularization must be 'ridge'. |
| 'sparsa' | Sparse Reconstruction by Separable Approximation (SpaRSA) [5] | Regularization must be 'lasso'. |

If you specify:

A ridge penalty (see Regularization) and X contains 100 or fewer predictor variables, then the default solver is 'bfgs'.

An SVM model (see Learner), a ridge penalty, and X contains more than 100 predictor variables, then the default solver is 'dual'.

A lasso penalty and X contains 100 or fewer predictor variables, then the default solver is 'sparsa'.

Otherwise, the default solver is 'sgd'. Note that the default solver can change when you perform hyperparameter optimization. For more information, see Regularization method determines the solver used during hyperparameter optimization.
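The default-solver rules above can be read as a decision chain. The sketch below only restates those rules; the variables learner, regularization, p, and defaultSolver are hypothetical and do not reflect fitclinear internals or the hyperparameter-optimization case.

```matlab
% Sketch: the default-solver rules written as a decision chain.
% learner, regularization, and p are hypothetical inputs, not fitclinear internals.
learner        = 'svm';      % 'svm' or 'logistic'
regularization = 'ridge';    % 'ridge' or 'lasso'
p              = 34023;      % number of predictor variables

if strcmp(regularization,'ridge') && p <= 100
    defaultSolver = 'bfgs';
elseif strcmp(learner,'svm') && strcmp(regularization,'ridge') && p > 100
    defaultSolver = 'dual';
elseif strcmp(regularization,'lasso') && p <= 100
    defaultSolver = 'sparsa';
else
    defaultSolver = 'sgd';
end
disp(defaultSolver)
```

For these inputs (an SVM learner, a ridge penalty, and 34023 predictors) the chain selects 'dual', matching the Solver value reported in FitInfo in the first example.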

If you specify a string array or cell array of solver names, then, for each value in Lambda, the software uses the solutions of solver j as a warm start for solver j + 1.

Example: {'sgd' 'lbfgs'} applies SGD to solve the objective, and uses the solution as a warm start for LBFGS.

Tip

SGD and ASGD can solve the objective function more quickly than other solvers, whereas LBFGS and SpaRSA can yield more accurate solutions than other solvers. Solver combinations like {'sgd' 'lbfgs'} and {'sgd' 'sparsa'} can balance optimization speed and accuracy.

When choosing between SGD and ASGD, consider that:

SGD takes less time per iteration, but requires more iterations to converge.

ASGD requires fewer iterations to converge, but takes more time per iteration.

If the predictor data is high-dimensional and Regularization is 'ridge', set Solver to any of these combinations: 'sgd'; 'asgd'; 'dual' if Learner is 'svm'; 'lbfgs'; {'sgd','lbfgs'}; {'asgd','lbfgs'}; {'dual','lbfgs'} if Learner is 'svm'.

Although you can set other combinations, they often lead to solutions with poor accuracy.

If the predictor data is moderate- through low-dimensional and Regularization is 'ridge', set Solver to 'bfgs'.

If Regularization is 'lasso', set Solver to any of these combinations: 'sgd'; 'asgd'; 'sparsa'; {'sgd','sparsa'}; {'asgd','sparsa'}.

Example: 'Solver',{'sgd','lbfgs'}

Initial linear coefficient estimates (β), specified as the comma-separated pair consisting of 'Beta' and a p-dimensional numeric vector or a p-by-L numeric matrix. p is the number of predictor variables after dummy variables are created for categorical variables (for more details, see CategoricalPredictors), and L is the number of regularization-strength values (for more details, see Lambda).

If you specify a p-dimensional vector, then the software optimizes the objective function L times using this process.

1. The software optimizes using Beta as the initial value and the minimum value of Lambda as the regularization strength.

2. The software optimizes again using the resulting estimate from the previous optimization as a warm start, and the next smallest value in Lambda as the regularization strength.

3. The software implements step 2 until it exhausts all values in Lambda.

If you specify a p-by-L matrix, then the software optimizes the objective function L times. At iteration j, the software uses Beta(:,j) as the initial value and, after it sorts Lambda in ascending order, uses Lambda(j) as the regularization strength.

If you set 'Solver','dual', then the software ignores Beta.

Data Types: single | double

Initial intercept estimate (b), specified as the comma-separated pair consisting of 'Bias' and a numeric scalar or an L-dimensional numeric vector. L is the number of regularization-strength values (for more details, see Lambda).

If you specify a scalar, then the software optimizes the objective function L times using this process.

1. The software optimizes using Bias as the initial value and the minimum value of Lambda as the regularization strength.

2. The software uses the resulting estimate as a warm start to the next optimization iteration, and uses the next smallest value in Lambda as the regularization strength.

3. The software implements step 2 until it exhausts all values in Lambda.

If you specify an L-dimensional vector, then the software optimizes the objective function L times. At iteration j, the software uses Bias(j) as the initial value and, after it sorts Lambda in ascending order, uses Lambda(j) as the regularization strength.

By default:

If Learner is 'logistic', then let gj be 1 if Y(j) is the positive class, and -1 otherwise. Bias is the weighted average of the g for training or, for cross-validation, in-fold observations.

If Learner is 'svm', then Bias is 0.

Data Types: single | double

Linear model intercept inclusion flag, specified as the comma-separated pair consisting of 'FitBias' and true or false.

| Value | Description |
|---|---|
| true | The software includes the bias term b in the linear model, and then estimates it. |
| false | The software sets b = 0 during estimation. |

Example: 'FitBias',false

Data Types: logical

Flag to fit the linear model intercept after optimization, specified as the comma-separated pair consisting of 'PostFitBias' and true or false.

| Value | Description |
|---|---|
| false | The software estimates the bias term b and the coefficients β during optimization. |
| true | The software estimates b in a separate step after optimization. |

If you specify true, then FitBias must be true.

Example: 'PostFitBias',true

Data Types: logical

Verbosity level, specified as the comma-separated pair consisting of 'Verbose' and a nonnegative integer. Verbose controls the amount of diagnostic information fitclinear displays at the command line.

| Value | Description |
|---|---|
| 0 | fitclinear does not display diagnostic information. |
| 1 | fitclinear periodically displays and stores the value of the objective function, gradient magnitude, and other diagnostic information. FitInfo.History contains the diagnostic information. |
| Any other positive integer | fitclinear displays and stores diagnostic information at each optimization iteration. FitInfo.History contains the diagnostic information. |

Example: 'Verbose',1

Data Types: double | single

SGD and ASGD Solver Options

Mini-batch size, specified as the comma-separated pair consisting of 'BatchSize' and a positive integer. At each iteration, the software estimates the subgradient using BatchSize observations from the training data.

If X is a numeric matrix, then the default value is 10.

If X is a sparse matrix, then the default value is max([10,ceil(sqrt(ff))]), where ff = numel(X)/nnz(X) (the fullness factor of X).

Example: 'BatchSize',100

Data Types: single | double
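The sparse-matrix default can be checked by hand with a short sketch. The matrix X below is a hypothetical 200-by-16 sparse matrix with two nonzero entries; the expression itself is the one quoted in the text.

```matlab
% Sketch: the default BatchSize rule applied to a small sparse matrix.
% X is a hypothetical 200-by-16 sparse matrix with 2 nonzero entries.
X  = sparse([1 200],[1 16],[1 1],200,16);
ff = numel(X)/nnz(X);                        % fullness factor as defined above
defaultBatchSize = max([10,ceil(sqrt(ff))])
```

The sparser the matrix, the larger ff becomes, so the default batch grows with sparsity but never falls below 10.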

Learning rate, specified as the comma-separated pair consisting of 'LearnRate' and a positive scalar. LearnRate controls the optimization step size by scaling the subgradient.

If Regularization is 'ridge', then LearnRate specifies the initial learning rate γ0. fitclinear determines the learning rate for iteration t, γt, from γ0.

If Regularization is 'lasso', then, for all iterations, LearnRate is constant.

By default, LearnRate is 1/sqrt(1+max((sum(X.^2,obsDim)))), where obsDim is 1 if the observations compose the columns of the predictor data X, and 2 otherwise.

Example: 'LearnRate',0.01

Data Types: single | double
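The default LearnRate expression can be evaluated directly. This sketch uses a hypothetical 3-by-2 matrix with observations in rows, so obsDim is 2 as described above.

```matlab
% Sketch: the default LearnRate expression for a hypothetical predictor matrix
% whose observations are in rows, so obsDim is 2.
X      = [3 4; 0 1; 1 1];    % 3 observations, 2 predictors
obsDim = 2;                  % use 1 instead when observations are in columns
defaultLearnRate = 1/sqrt(1 + max(sum(X.^2,obsDim)))
```

The expression scales the step size by the largest squared observation norm, so one large-magnitude observation lowers the default rate for the whole fit.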

Flag to decrease the learning rate when the software detects divergence (that is, over-stepping the minimum), specified as the comma-separated pair consisting of 'OptimizeLearnRate' and true or false.

If OptimizeLearnRate is true, then:

1. For the first few optimization iterations, the software starts optimization using LearnRate as the learning rate.

2. If the value of the objective function increases, then the software restarts and uses half of the current value of the learning rate.

3. The software iterates step 2 until the objective function decreases.

Example: 'OptimizeLearnRate',true

Data Types: logical
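The halving procedure can be illustrated on a toy problem. This is a sketch on the one-dimensional objective f(b) = b², not fitclinear's internal implementation; every name and value in it is hypothetical.

```matlab
% Sketch: halve the learning rate until a gradient step decreases the objective.
% Toy problem f(b) = b^2; this is not fitclinear's internal implementation.
f     = @(b) b.^2;       % objective
gradf = @(b) 2*b;        % gradient
b0        = 1;           % starting point
learnRate = 1.5;         % deliberately too large: the first step overshoots

bNew = b0 - learnRate*gradf(b0);
while f(bNew) >= f(b0)
    learnRate = learnRate/2;              % restart with half the rate
    bNew = b0 - learnRate*gradf(b0);      % retry from the same starting point
end
learnRate
```

Here the first step with rate 1.5 increases the objective, and a single halving to 0.75 is enough for the step to decrease it.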

Number of mini-batches between lasso truncation runs, specified as the comma-separated pair consisting of 'TruncationPeriod' and a positive integer.

After a truncation run, the software applies a soft threshold to the linear coefficients. That is, after processing k = TruncationPeriod mini-batches, the software truncates the estimated coefficient j using

$$\hat{\beta}_j^{\ast} = \begin{cases} \hat{\beta}_j - u_t & \text{if } \hat{\beta}_j > u_t, \\ 0 & \text{if } \lvert \hat{\beta}_j \rvert \le u_t, \\ \hat{\beta}_j + u_t & \text{if } \hat{\beta}_j < -u_t. \end{cases}$$

For SGD, $\hat{\beta}_j$ is the estimate of coefficient j after processing k mini-batches, and $u_t = k\gamma_t\lambda$. γt is the learning rate at iteration t. λ is the value of Lambda.

For ASGD, $\hat{\beta}_j$ is the averaged estimate coefficient j after processing k mini-batches, and $u_t = k\lambda$.

If Regularization is 'ridge', then the software ignores TruncationPeriod.

Example: 'TruncationPeriod',100

Data Types: single | double
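A soft threshold of the kind applied in a truncation run can be sketched in one line. The coefficient vector beta and the threshold u are hypothetical values chosen for illustration; how fitclinear derives its threshold is not modeled here.

```matlab
% Sketch: soft-threshold a coefficient vector, as in a lasso truncation run.
% beta and the threshold u are hypothetical values.
beta = [0.8; -0.05; 0.02; -1.2];
u    = 0.1;                                   % truncation threshold

betaTrunc = sign(beta).*max(abs(beta) - u,0)  % shrink toward 0; small entries become exactly 0
```

Coefficients with magnitude at or below u become zero, and the rest move toward zero by u, which is how lasso fits accumulate sparsity.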

Other Classification Options

Categorical predictors list, specified as one of the values in this table. The descriptions assume that the predictor data has observations in rows and predictors in columns.

| Value | Description |
|---|---|
| Vector of positive integers | Each entry in the vector is an index value indicating that the corresponding predictor is categorical. The index values are between 1 and p, where p is the number of predictors used to train the model. |
| Logical vector | A true entry means that the corresponding predictor is categorical. The length of the vector is p. |
| Character matrix | Each row of the matrix is the name of a predictor variable. The names must match the entries in PredictorNames. Pad the names with extra blanks so each row of the character matrix has the same length. |
| String array or cell array of character vectors | Each element in the array is the name of a predictor variable. The names must match the entries in PredictorNames. |
| "all" | All predictors are categorical. |

By default, if the predictor data is in a table (Tbl), fitclinear assumes that a variable is categorical if it is a logical vector, categorical vector, character array, string array, or cell array of character vectors. If the predictor data is a matrix (X), fitclinear assumes that all predictors are continuous. To identify any other predictors as categorical predictors, specify them by using the CategoricalPredictors name-value argument.

For the identified categorical predictors, fitclinear creates dummy variables using two different schemes, depending on whether a categorical variable is unordered or ordered. For an unordered categorical variable, fitclinear creates one dummy variable for each level of the categorical variable. For an ordered categorical variable, fitclinear creates one less dummy variable than the number of categories. For details, see Automatic Creation of Dummy Variables.

Example: CategoricalPredictors="all"

Data Types: single | double | logical | char | string | cell
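The dummy-variable scheme for an unordered categorical predictor (one indicator column per level) can be illustrated without any toolbox functions. This is a sketch of the idea only; the variable names levels, numLevels, and dummies are hypothetical, and the coding that fitclinear uses for ordered variables is not shown.

```matlab
% Level index of an unordered categorical predictor for five observations
% (illustrative data).
levels = [1; 3; 2; 3; 1];
numLevels = 3;

% One indicator column per level: row i has a 1 in column levels(i).
dummies = double(levels == 1:numLevels);
disp(dummies)
```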

Names of classes to use for training, specified as a categorical, character, or string

array; a logical or numeric vector; or a cell array of character vectors.

ClassNames must have the same data type as the response variable

in Tbl or Y.

If ClassNames is a character array, then each element must correspond to one row of the array.

Use ClassNames to:

Specify the order of the classes during training.

Specify the order of any input or output argument dimension that corresponds to the class order. For example, use ClassNames to specify the order of the dimensions of Cost or the column order of classification scores returned by predict.

Select a subset of classes for training. For example, suppose that the set of all distinct class names in Y is ["a","b","c"]. To train the model using observations from classes "a" and "c" only, specify ClassNames=["a","c"].

The default value for ClassNames is the set of all distinct class names in the response variable in Tbl or Y.

Example: ClassNames=["b","g"]

Data Types: categorical | char | string | logical | single | double | cell

Misclassification cost, specified as the comma-separated pair consisting of

'Cost' and a square matrix or structure.

If you specify the square matrix cost ('Cost',cost), then cost(i,j) is the cost of classifying a point into class j if its true class is i. That is, the rows correspond to the true class, and the columns correspond to the predicted class. To specify the class order for the corresponding rows and columns of cost, use the ClassNames name-value pair argument.

If you specify the structure S ('Cost',S), then it must have two fields:

S.ClassNames, which contains the class names as a variable of the same data type as Y

S.ClassificationCosts, which contains the cost matrix with rows and columns ordered as in S.ClassNames

The default value for Cost is ones(K) - eye(K), where K is the number of distinct classes.

fitclinear uses Cost to adjust the prior

class probabilities specified in Prior. Then,

fitclinear uses the adjusted prior probabilities for

training.

Example: 'Cost',[0 2; 1 0]

Data Types: single | double | struct
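As a minimal sketch, the two accepted forms of Cost can be built as follows. The class names 'b' and 'g' are assumptions made for illustration, and the variable names defaultCost, cost, and S are hypothetical.

```matlab
K = 2;                             % number of distinct classes (binary problem)
defaultCost = ones(K) - eye(K);    % default: unit cost for every misclassification

% Matrix form: rows are true classes, columns are predicted classes.
% Here, predicting class 2 when the true class is 1 costs twice as much.
cost = [0 2; 1 0];

% Structure form: the class order is stored with the cost matrix.
% The double braces keep ClassNames as a single cell-array field.
S = struct('ClassNames',{{'b','g'}},'ClassificationCosts',cost);
disp(S.ClassificationCosts)
```

Either cost or S could then be passed as the value of 'Cost'. The structure form avoids relying on a separately specified class order.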

Predictor variable names, specified as a string array of unique names or cell array of unique

character vectors. The functionality of PredictorNames depends on the

way you supply the training data.

If you supply X and Y, then you can use PredictorNames to assign names to the predictor variables in X.

The order of the names in PredictorNames must correspond to the predictor order in X. Assuming that X has the default orientation, with observations in rows and predictors in columns, PredictorNames{1} is the name of X(:,1), PredictorNames{2} is the name of X(:,2), and so on. Also, size(X,2) and numel(PredictorNames) must be equal.

By default, PredictorNames is {'x1','x2',...}.

If you supply Tbl, then you can use PredictorNames to choose which predictor variables to use in training. That is, fitclinear uses only the predictor variables in PredictorNames and the response variable during training.

PredictorNames must be a subset of Tbl.Properties.VariableNames and cannot include the name of the response variable.

By default, PredictorNames contains the names of all predictor variables.

A good practice is to specify the predictors for training using either PredictorNames or formula, but not both.

Example: PredictorNames=["SepalLength","SepalWidth","PetalLength","PetalWidth"]

Data Types: string | cell

Prior probabilities for each class, specified as the comma-separated pair consisting

of 'Prior' and 'empirical',

'uniform', a numeric vector, or a structure array.

This table summarizes the available options for setting prior probabilities.

| Value | Description |
|---|---|
| 'empirical' | The class prior probabilities are the class relative frequencies in Y. |
| 'uniform' | All class prior probabilities are equal to 1/K, where K is the number of classes. |
| numeric vector | Each element is a class prior probability. Order the elements according to their order in Y. If you specify the order using the 'ClassNames' name-value pair argument, then order the elements accordingly. |
| structure array | A structure S with two fields: S.ClassNames, which contains the class names as a variable of the same type as Y, and S.ClassProbs, which contains a vector of corresponding prior probabilities. |

fitclinear normalizes the prior probabilities in

Prior to sum to 1.

Example: 'Prior',struct('ClassNames',{{'setosa','versicolor'}},'ClassProbs',1:2)

Data Types: char | string | double | single | struct
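The normalization step means that the prior values need only be proportional to the intended probabilities. The following sketch reproduces that arithmetic for the values 1:2 used in the example above; the variable names rawPrior and prior are hypothetical.

```matlab
rawPrior = 1:2;                       % unnormalized prior values, as in the example
prior = rawPrior / sum(rawPrior);     % rescale so that the values sum to 1
disp(prior)                           % one third and two thirds
```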

Response variable name, specified as a character vector or string scalar.

If you supply Y, then you can use ResponseName to specify a name for the response variable.

If you supply ResponseVarName or formula, then you cannot use ResponseName.

Example: ResponseName="response"

Data Types: char | string

Score transformation, specified as a character vector, string scalar, or function handle.

This table summarizes the available character vectors and string scalars.

| Value | Description |
|---|---|
| "doublelogit" | 1/(1 + e^(-2x)) |
| "invlogit" | log(x / (1 - x)) |
| "ismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to 0 |
| "logit" | 1/(1 + e^(-x)) |
| "none" or "identity" | x (no transformation) |
| "sign" | -1 for x < 0; 0 for x = 0; 1 for x > 0 |
| "symmetric" | 2x - 1 |
| "symmetricismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to -1 |
| "symmetriclogit" | 2/(1 + e^(-x)) - 1 |

For a MATLAB function or a function you define, use its function handle for the score transform. The function handle must accept a matrix (the original scores) and return a matrix of the same size (the transformed scores).

Example: ScoreTransform="logit"

Data Types: char | string | function_handle
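A custom score transform only has to map a score matrix to a matrix of the same size. As a minimal sketch, the following anonymous function reproduces the "logit" formula from the table; the names logitTransform, scores, and transformed are hypothetical.

```matlab
% Element-wise logistic transform, equivalent in form to the "logit" entry.
logitTransform = @(x) 1 ./ (1 + exp(-x));

scores = [-2 2; 0 0; 1.5 -1.5];          % illustrative raw scores, one row per observation
transformed = logitTransform(scores);    % same size as the input

disp(transformed)
```

A handle such as logitTransform could then be supplied as the value of ScoreTransform in place of a built-in name.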

Observation weights, specified as a nonnegative numeric vector or the name of a variable in Tbl. The software weights each observation in X or Tbl with the corresponding value in Weights. The length of Weights must equal the number of observations in X or Tbl.

If you specify the input data as a table Tbl, then Weights can be the name of a variable in Tbl that contains a numeric vector. In this case, you must specify Weights as a character vector or string scalar. For example, if the weights vector W is stored as Tbl.W, then specify it as 'W'. Otherwise, the software treats all columns of Tbl, including W, as predictors or the response variable when training the model.

By default, Weights is ones(n,1), where n is the number of observations in X or Tbl.

The software normalizes Weights to sum to the value of the prior

probability in the respective class. Inf weights are not supported.

Data Types: single | double | char | string
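The weight normalization described above can be sketched as follows. This is an illustration of the stated rule (weights in each class sum to that class's prior probability), not the internal code of fitclinear. All variable names and values are hypothetical.

```matlab
y = [1; 1; 2; 2; 2];        % class index of each observation (illustrative)
w = [1; 3; 1; 1; 2];        % raw observation weights
prior = [0.5 0.5];          % assumed class prior probabilities

wNorm = zeros(size(w));
for k = 1:numel(prior)
    inClass = (y == k);
    % Rescale the weights of class k so that they sum to prior(k).
    wNorm(inClass) = w(inClass) / sum(w(inClass)) * prior(k);
end
disp(wNorm')
```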

Cross-Validation Options

Cross-validation flag, specified as the comma-separated pair

consisting of 'Crossval' and 'on' or 'off'.

If you specify 'on', then the software implements

10-fold cross-validation.

To override this cross-validation setting, use one of these name-value pair arguments:

CVPartition, Holdout,

KFold, or Leaveout. To create a

cross-validated model, you can use one cross-validation name-value pair argument at a

time only.

Example: 'Crossval','on'

Cross-validation partition, specified as the comma-separated

pair consisting of 'CVPartition' and a cvpartition partition

object as created by cvpartition.

The partition object specifies the type of cross-validation, and also

the indexing for training and validation sets.

To create a cross-validated model, you can use one of these four options only:

CVPartition, Holdout,

KFold, or Leaveout.

Fraction of data used for holdout validation, specified as the comma-separated pair consisting of 'Holdout' and a scalar value in the range (0,1). If you specify 'Holdout',p, then the software:

Randomly reserves p*100% of the data as validation data, and trains the model using the rest of the data

Stores the compact, trained model in the Trained property of the cross-validated model.

To create a cross-validated model, you can use one of these four options only:

CVPartition, Holdout,

KFold, or Leaveout.

Example: 'Holdout',0.1

Data Types: double | single

Number of folds to use in a cross-validated classifier, specified

as the comma-separated pair consisting of 'KFold' and

a positive integer value greater than 1. If you specify, e.g., 'KFold',k,

then the software:

Randomly partitions the data into k sets

For each set, reserves the set as validation data, and trains the model using the other k – 1 sets

Stores the k compact, trained models in the cells of a k-by-1 cell vector in the Trained property of the cross-validated model.

To create a cross-validated model, you can use one of these four options only:

CVPartition,

Holdout,

KFold, or

Leaveout.

Example: 'KFold',8

Data Types: single | double
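As a hedged usage sketch, the following call cross-validates a linear classifier with eight folds. It assumes that the ionosphere sample data set shipped with Statistics and Machine Learning Toolbox is available; the variable names CVMdl, numFolds, and err are hypothetical.

```matlab
load ionosphere                        % X: 351-by-34 predictors, Y: class labels
rng(1)                                 % for a reproducible partition

CVMdl = fitclinear(X,Y,'KFold',8);     % returns a cross-validated model
numFolds = numel(CVMdl.Trained);       % one compact model per fold
err = kfoldLoss(CVMdl);                % cross-validated classification error

fprintf('Folds: %d, cross-validated error: %.3f\n',numFolds,err)
```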

Leave-one-out cross-validation, specified as "off" or "on".

If you specify Leaveout as "on", then for each

observation, fitclinear reserves the observation as test data, and

trains the model using the other observations.

You can use only one of these name-value arguments: CVPartition,

Holdout, KFold, or

Leaveout.

Example: Leaveout="on"

Data Types: char | string

SGD and ASGD Convergence Controls

Maximal number of batches to process, specified as the comma-separated

pair consisting of 'BatchLimit' and a positive

integer. When the software processes BatchLimit batches,

it terminates optimization.

By default:

If you specify BatchLimit, then fitclinear uses the argument that results in processing the fewest observations, either BatchLimit or PassLimit.

Example: 'BatchLimit',100

Data Types: single | double

Relative tolerance on the linear coefficients and the bias term (intercept), specified

as the comma-separated pair consisting of 'BetaTolerance' and a

nonnegative scalar.

Let $B_t = [\beta_t' \; b_t]$, that is, the vector of the coefficients and the bias term at optimization iteration t. If $\left\| \dfrac{B_t - B_{t-1}}{B_t} \right\|_2 < \text{BetaTolerance}$, then optimization terminates.

If the software converges for the last solver specified in

Solver, then optimization terminates. Otherwise, the software uses

the next solver specified in Solver.

Example: 'BetaTolerance',1e-6

Data Types: single | double
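A relative tolerance of this kind compares the change in the coefficient vector between two iterations with the size of the current coefficients. The sketch below assumes that the criterion is the 2-norm of the element-wise relative change; that form, and all names and values, are assumptions made for illustration rather than a statement of the internal implementation.

```matlab
Bprev = [0.5; -1.2; 0.3];                      % coefficients and bias at iteration t-1
Bcurr = [0.5000002; -1.2000003; 0.3000001];    % coefficients and bias at iteration t
betaTolerance = 1e-6;                          % value that would be passed as 'BetaTolerance'

% Assumed criterion: 2-norm of the element-wise relative change.
relChange = norm((Bcurr - Bprev) ./ Bcurr);
converged = relChange < betaTolerance;

fprintf('Relative change: %.3g, converged: %d\n',relChange,converged)
```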

Number of batches to process before next convergence check, specified as the

comma-separated pair consisting of 'NumCheckConvergence' and a

positive integer.

To specify the batch size, see BatchSize.

The software checks for convergence about 10 times per pass through the entire data set by default.

Example: 'NumCheckConvergence',100

Data Types: single | double

Maximal number of passes through the data, specified as the comma-separated pair consisting of 'PassLimit' and a positive integer.

fitclinear processes all observations when it completes one pass through the data.

When fitclinear passes through the data PassLimit times, it terminates optimization.

If you specify BatchLimit, then

fitclinear uses the argument that results in

processing the fewest observations, either

BatchLimit or

PassLimit.

Example: 'PassLimit',5

Data Types: single | double
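The interaction between BatchLimit and PassLimit comes down to simple arithmetic. The following sketch assumes that each batch contains BatchSize observations and that one pass processes all n observations; the numbers and variable names are illustrative only.

```matlab
n = 351;             % number of observations (illustrative)
batchSize = 10;      % value of BatchSize
batchLimit = 100;    % value of BatchLimit
passLimit = 5;       % value of PassLimit

obsByBatchLimit = batchLimit * batchSize;   % observations processed if BatchLimit stops training
obsByPassLimit = passLimit * n;             % observations processed if PassLimit stops training

% The limit that results in fewer processed observations takes effect.
if obsByBatchLimit <= obsByPassLimit
    bindingLimit = 'BatchLimit';
else
    bindingLimit = 'PassLimit';
end
fprintf('%s is the binding limit.\n',bindingLimit)
```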

Validation data for optimization convergence detection, specified as the comma-separated pair

consisting of 'ValidationData' and a cell array or table.

During optimization, the software periodically estimates the loss of ValidationData. If the validation-data loss increases, then the software terminates optimization. For more details, see Algorithms. To optimize hyperparameters using cross-validation, see cross-validation options such as CrossVal.

You can specify ValidationData as a table if you use a table

Tbl of predictor data that contains the response variable. In this

case, ValidationData must contain the same predictors and response

contained in Tbl. The software does not apply weights to observations,

even if Tbl contains a vector of weights. To specify weights, you must

specify ValidationData as a cell array.

If you specify ValidationData as a cell array, then it must have the following format:

ValidationData{1} must have the same data type and orientation as the predictor data. That is, if you use a predictor matrix X, then ValidationData{1} must be an m-by-p or p-by-m full or sparse matrix of predictor data that has the same orientation as X. The predictor variables in the training data X and ValidationData{1} must correspond. Similarly, if you use a table Tbl of predictor data, then ValidationData{1} must be a table containing the same predictor variables contained in Tbl. The number of observations in ValidationData{1} and the predictor data can vary.

ValidationData{2} must match the data type and format of the response variable, either Y or ResponseVarName. If ValidationData{2} is an array of class labels, then it must have the same number of elements as the number of observations in ValidationData{1}. The set of all distinct labels of ValidationData{2} must be a subset of all distinct labels of Y. If ValidationData{1} is a table, then ValidationData{2} can be the name of the response variable in the table. If you want to use the same ResponseVarName or formula, you can specify ValidationData{2} as [].

Optionally, you can specify ValidationData{3} as an m-dimensional numeric vector of observation weights or the name of a variable in the table ValidationData{1} that contains observation weights. The software normalizes the weights with the validation data so that they sum to 1.

If you specify ValidationData and want to display the validation loss at

the command line, specify a value larger than 0 for Verbose.

If the software converges for the last solver specified in Solver, then optimization terminates. Otherwise, the software uses the next solver specified in Solver.

By default, the software does not detect convergence by monitoring validation-data loss.
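As a hedged usage sketch, the cell-array form of ValidationData can be assembled from a random split of the data. The example assumes the ionosphere sample data set is available; the split fraction and all variable names are hypothetical choices.

```matlab
load ionosphere                      % X: predictors in columns, Y: class labels
rng(1)                               % for a reproducible split

n = size(X,1);
idx = randperm(n);
nVal = round(0.2*n);                 % hold back about 20% for validation (arbitrary choice)
valIdx = idx(1:nVal);
trainIdx = idx(nVal+1:end);

% {predictors, labels, optional observation weights}
valData = {X(valIdx,:), Y(valIdx), ones(nVal,1)};

Mdl = fitclinear(X(trainIdx,:),Y(trainIdx), ...
    'Solver','sgd','ValidationData',valData);
```

The validation predictors keep the same orientation as the training matrix, and the third cell carries equal weights here only to show the format.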

Dual SGD Convergence Controls

Relative tolerance on the linear coefficients and the bias term (intercept), specified

as the comma-separated pair consisting of 'BetaTolerance' and a

nonnegative scalar.

Let $B_t = [\beta_t' \; b_t]$, that is, the vector of the coefficients and the bias term at optimization iteration t. If $\left\| \dfrac{B_t - B_{t-1}}{B_t} \right\|_2 < \text{BetaTolerance}$, then optimization terminates.

If you also specify DeltaGradientTolerance, then optimization

terminates when the software satisfies either stopping criterion.

If the software converges for the last solver specified in

Solver, then optimization terminates. Otherwise, the software uses

the next solver specified in Solver.

Example: 'BetaTolerance',1e-6

Data Types: single | double

Gradient-difference tolerance between upper and lower pool Karush-Kuhn-Tucker (KKT) complementarity conditions violators, specified as a nonnegative scalar.

If the magnitude of the KKT violators is less than DeltaGradientTolerance, then the software terminates optimization.

If the software converges for the last solver specified in Solver, then optimization terminates. Otherwise, the software uses the next solver specified in Solver.

Example: 'DeltaGradientTolerance',1e-2

Data Types: double | single

Number of passes through entire data set to process before next convergence check,

specified as the comma-separated pair consisting of

'NumCheckConvergence' and a positive integer.

Example: 'NumCheckConvergence',100

Data Types: single | double

Maximal number of passes through the data, specified as the

comma-separated pair consisting of 'PassLimit' and

a positive integer.

When the software completes one pass through the data, it has processed all observations.

When the software passes through the data PassLimit times,

it terminates optimization.

Example: 'PassLimit',5

Data Types: single | double

Validation data for optimization convergence detection, specified as the comma-separated pair

consisting of 'ValidationData' and a cell array or table.

During optimization, the software periodically estimates the loss of ValidationData. If the validation-data loss increases, then the software terminates optimization. For more details, see Algorithms. To optimize hyperparameters using cross-validation, see cross-validation options such as CrossVal.

You can specify ValidationData as a table if you use a table

Tbl of predictor data that contains the response variable. In this

case, ValidationData must contain the same predictors and response

contained in Tbl. The software does not apply weights to observations,

even if Tbl contains a vector of weights. To specify weights, you must

specify ValidationData as a cell array.

If you specify ValidationData as a cell array, then it must have the following format:

ValidationData{1} must have the same data type and orientation as the predictor data. That is, if you use a predictor matrix X, then ValidationData{1} must be an m-by-p or p-by-m full or sparse matrix of predictor data that has the same orientation as X. The predictor variables in the training data X and ValidationData{1} must correspond. Similarly, if you use a table Tbl of predictor data, then ValidationData{1} must be a table containing the same predictor variables contained in Tbl. The number of observations in ValidationData{1} and the predictor data can vary.

ValidationData{2} must match the data type and format of the response variable, either Y or ResponseVarName. If ValidationData{2} is an array of class labels, then it must have the same number of elements as the number of observations in ValidationData{1}. The set of all distinct labels of ValidationData{2} must be a subset of all distinct labels of Y. If ValidationData{1} is a table, then ValidationData{2} can be the name of the response variable in the table. If you want to use the same ResponseVarName or formula, you can specify ValidationData{2} as [].

Optionally, you can specify ValidationData{3} as an m-dimensional numeric vector of observation weights or the name of a variable in the table ValidationData{1} that contains observation weights. The software normalizes the weights with the validation data so that they sum to 1.

If you specify ValidationData and want to display the validation loss at

the command line, specify a value larger than 0 for Verbose.

If the software converges for the last solver specified in Solver, then optimization terminates. Otherwise, the software uses the next solver specified in Solver.

By default, the software does not detect convergence by monitoring validation-data loss.

BFGS, LBFGS, and SpaRSA Convergence Controls

Relative tolerance on the linear coefficients and the bias term (intercept), specified as a nonnegative scalar.

Let $B_t = [\beta_t' \; b_t]$, that is, the vector of the coefficients and the bias term at optimization iteration t. If $\left\| \dfrac{B_t - B_{t-1}}{B_t} \right\|_2 < \text{BetaTolerance}$, then optimization terminates.

If you also specify GradientTolerance, then optimization terminates when the software satisfies either stopping criterion.

If the software converges for the last solver specified in

Solver, then optimization terminates. Otherwise, the software uses

the next solver specified in Solver.

Example: 'BetaTolerance',1e-6

Data Types: single | double

Absolute gradient tolerance, specified as a nonnegative scalar.

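Let $\nabla \mathcal{L}_t$ be the gradient vector of the objective function with respect to the coefficients and bias term at optimization iteration t. If $\left\| \nabla \mathcal{L}_t \right\|_\infty = \max \left| \nabla \mathcal{L}_t \right| < \text{GradientTolerance}$, then optimization terminates.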

If you also specify BetaTolerance, then optimization terminates when the

software satisfies either stopping criterion.

If the software converges for the last solver specified in Solver, then optimization terminates. Otherwise, the software uses the next solver specified in Solver.

Example: 'GradientTolerance',1e-5

Data Types: single | double
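An absolute gradient tolerance is typically checked against the largest gradient component in magnitude. The sketch below assumes that the criterion uses the infinity norm of the gradient; the gradient values and variable names are made up for illustration.

```matlab
gradL = [2e-6; -8e-6; 5e-7];        % illustrative gradient of the objective at iteration t
gradientTolerance = 1e-5;           % value that would be passed as 'GradientTolerance'

gradNorm = norm(gradL,Inf);         % largest absolute component of the gradient
converged = gradNorm < gradientTolerance;

fprintf('Gradient norm: %.1e, converged: %d\n',gradNorm,converged)
```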

Size of history buffer for Hessian approximation, specified

as the comma-separated pair consisting of 'HessianHistorySize' and

a positive integer. That is, at each iteration, the software composes

the Hessian using statistics from the latest HessianHistorySize iterations.

The software does not support 'HessianHistorySize' for

SpaRSA.

Example: 'HessianHistorySize',10

Data Types: single | double

Maximal number of optimization iterations, specified as the

comma-separated pair consisting of 'IterationLimit' and

a positive integer. IterationLimit applies to these

values of Solver: 'bfgs', 'lbfgs',

and 'sparsa'.

Example: 'IterationLimit',500

Data Types: single | double

Validation data for optimization convergence detection, specified as the comma-separated pair

consisting of 'ValidationData' and a cell array or table.

During optimization, the software periodically estimates the loss of ValidationData. If the validation-data loss increases, then the software terminates optimization. For more details, see Algorithms. To optimize hyperparameters using cross-validation, see cross-validation options such as CrossVal.

You can specify ValidationData as a table if you use a table

Tbl of predictor data that contains the response variable. In this

case, ValidationData must contain the same predictors and response

contained in Tbl. The software does not apply weights to observations,

even if Tbl contains a vector of weights. To specify weights, you must

specify ValidationData as a cell array.

If you specify ValidationData as a cell array, then it must have the following format:

ValidationData{1} must have the same data type and orientation as the predictor data. That is, if you use a predictor matrix X, then ValidationData{1} must be an m-by-p or p-by-m full or sparse matrix of predictor data that has the same orientation as X. The predictor variables in the training data X and ValidationData{1} must correspond. Similarly, if you use a table Tbl of predictor data, then ValidationData{1} must be a table containing the same predictor variables contained in Tbl. The number of observations in ValidationData{1} and the predictor data can vary.

ValidationData{2} must match the data type and format of the response variable, either Y or ResponseVarName. If ValidationData{2} is an array of class labels, then it must have the same number of elements as the number of observations in ValidationData{1}. The set of all distinct labels of ValidationData{2} must be a subset of all distinct labels of Y. If ValidationData{1} is a table, then ValidationData{2} can be the name of the response variable in the table. If you want to use the same ResponseVarName or formula, you can specify ValidationData{2} as [].

Optionally, you can specify ValidationData{3} as an m-dimensional numeric vector of observation weights or the name of a variable in the table ValidationData{1} that contains observation weights. The software normalizes the weights with the validation data so that they sum to 1.

If you specify ValidationData and want to display the validation loss at

the command line, specify a value larger than 0 for Verbose.

If the software converges for the last solver specified in Solver, then optimization terminates. Otherwise, the software uses the next solver specified in Solver.

By default, the software does not detect convergence by monitoring validation-data loss.

Hyperparameter Optimization

Parameters to optimize, specified as the comma-separated pair consisting of 'OptimizeHyperparameters' and one of the following:

'none': Do not optimize.

'auto': Use {'Lambda','Learner'}.

'all': Optimize all eligible parameters.

String array or cell array of eligible parameter names.

Vector of optimizableVariable objects, typically the output of hyperparameters.

The optimization attempts to minimize the cross-validation loss

(error) for fitclinear by varying the parameters. To control the

cross-validation type and other aspects of the optimization, use the

HyperparameterOptimizationOptions name-value argument. When you use

HyperparameterOptimizationOptions, you can use the (compact) model size

instead of the cross-validation loss as the optimization objective by setting the

ConstraintType and ConstraintBounds options.

Note

The values of OptimizeHyperparameters override any values you

specify using other name-value arguments. For example, setting

OptimizeHyperparameters to "auto" causes

fitclinear to optimize hyperparameters corresponding to the

"auto" option and to ignore any specified values for the

hyperparameters.

The eligible parameters for fitclinear are:

Lambda: fitclinear searches among positive values, by default log-scaled in the range [1e-5/NumObservations,1e5/NumObservations].

Learner: fitclinear searches among 'svm' and 'logistic'.

Regularization: fitclinear searches among 'ridge' and 'lasso'. When Regularization is 'ridge', the function sets the Solver value to 'lbfgs' by default. When Regularization is 'lasso', the function sets the Solver value to 'sparsa' by default.

Set nondefault parameters by passing a vector of optimizableVariable objects that have nondefault values. For example,

    load fisheriris
    params = hyperparameters('fitclinear',meas,species);
    params(1).Range = [1e-4,1e6];

Pass params as the value of OptimizeHyperparameters.

By default, the iterative display appears at the command line,

and plots appear according to the number of hyperparameters in the optimization. For the

optimization and plots, the objective function is the misclassification rate. To control the

iterative display, set the Verbose option of the

HyperparameterOptimizationOptions name-value argument. To control the

plots, set the ShowPlots field of the

HyperparameterOptimizationOptions name-value argument.

For an example, see Optimize Linear Classifier.

Example: 'OptimizeHyperparameters','auto'

Options for optimization, specified as a HyperparameterOptimizationOptions object or a structure. This argument modifies the effect of the OptimizeHyperparameters name-value argument. If you specify HyperparameterOptimizationOptions, you must also specify OptimizeHyperparameters. All the options are optional. However, you must set ConstraintBounds and ConstraintType to return AggregateOptimizationResults. The options that you can set in a structure are the same as those in the HyperparameterOptimizationOptions object.

| Option | Values | Default |
|---|---|---|
| Optimizer | "bayesopt", "gridsearch", or "randomsearch" | "bayesopt" |
| ConstraintBounds | Constraint bounds for N optimization problems, specified as an N-by-2 numeric matrix or [] | [] |
| ConstraintTarget | Constraint target for the optimization problems | If you specify ConstraintBounds and ConstraintType, then the default value is "matlab". Otherwise, the default value is []. |
| ConstraintType | Constraint type for the optimization problems | [] |
| AcquisitionFunctionName | Type of acquisition function | "expected-improvement-per-second-plus" |
| LossFun | Type of validation loss to optimize, specified as "auto", "classifcost", or "classiferror". In the case of fitclinear, the "auto" and "classiferror" options are equivalent, and the software uses the misclassification rate, expressed as a decimal. "classifcost" indicates to use the observed misclassification cost. | "auto" |
| MaxObjectiveEvaluations | Maximum number of objective function evaluations. If you specify multiple optimization problems using ConstraintBounds, the value of MaxObjectiveEvaluations applies to each optimization problem individually. | 30 for "bayesopt" and "randomsearch", and the entire grid for "gridsearch" |
| MaxTime | Time limit for the optimization, specified as a nonnegative real scalar. The time limit is in seconds. | Inf |
| NumGridDivisions | For Optimizer="gridsearch", the number of values in each dimension. The value can be a vector of positive integers giving the number of values for each dimension, or a scalar that applies to all dimensions. The software ignores this option for categorical variables. | 10 |
| ShowPlots | Logical value indicating whether to show plots of the optimization progress. If this option is true, the software plots the best observed objective function value against the iteration number. If you use Bayesian optimization (Optimizer="bayesopt"), the software also plots the best estimated objective function value. The best observed objective function values and best estimated objective function values correspond to the values in the BestSoFar (observed) and BestSoFar (estim.) columns of the iterative display, respectively. You can find these values in the properties ObjectiveMinimumTrace and EstimatedObjectiveMinimumTrace of the SupervisedLearningBayesianOptimization object. If the problem includes one or two optimization parameters for Bayesian optimization, then ShowPlots also plots a model of the objective function against the parameters. | true |
| SaveIntermediateResults | Logical value indicating whether to save the optimization results. If this option is true, the software overwrites a workspace variable named SupervisedLearningBayesoptResults at each iteration. The variable is a SupervisedLearningBayesianOptimization object. If you specify multiple optimization problems using ConstraintBounds, the workspace variable is an AggregateBayesianOptimization object named AggregateBayesoptResults. | false |
| Verbose | Display level at the command line | 1 |
| UseParallel | Logical value indicating whether to run the Bayesian optimization in parallel, which requires Parallel Computing Toolbox™. Due to the nonreproducibility of parallel timing, parallel Bayesian optimization does not necessarily yield reproducible results. For details, see Parallel Bayesian Optimization. | false |
| Repartition | Logical value indicating whether to repartition the cross-validation at every iteration | false |
| Specify only one of the following three options. | | |
| CVPartition | cvpartition object created by cvpartition | KFold=5 if you do not specify a cross-validation option |
| Holdout | Scalar in the range (0,1) representing the holdout fraction | |
| KFold | Integer greater than 1 | |

Example: HyperparameterOptimizationOptions=struct(UseParallel=true)
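As a hedged usage sketch, the options can be collected in a structure and passed together with OptimizeHyperparameters. The example assumes the ionosphere sample data set is available. The option values (10 evaluations, no plots, no display) are arbitrary choices to keep the run short, and the variable names are hypothetical.

```matlab
load ionosphere                      % X: predictors, Y: class labels
rng(1)                               % for reproducibility

% Option names match the table above; the values are illustrative.
opts = struct('MaxObjectiveEvaluations',10, ...
    'AcquisitionFunctionName','expected-improvement-plus', ...
    'ShowPlots',false, ...
    'Verbose',0, ...
    'KFold',5);

[Mdl,FitInfo,HyperparameterOptimizationResults] = fitclinear(X,Y, ...
    'OptimizeHyperparameters','auto', ...
    'HyperparameterOptimizationOptions',opts);
```

Choosing an acquisition function without "per-second" in its name avoids the dependence on run time, which makes the search easier to reproduce.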

Output Arguments

Trained linear classification model, returned as a ClassificationLinear object, a

ClassificationPartitionedLinear

object, or a cell array of model objects.

If you set any of the name-value arguments CrossVal, CVPartition, Holdout, KFold, or Leaveout, then Mdl is a ClassificationPartitionedLinear object.

If you specify OptimizeHyperparameters and set the ConstraintType and ConstraintBounds options of HyperparameterOptimizationOptions, then Mdl is an N-by-1 cell array of model objects, where N is equal to the number of rows in ConstraintBounds. If none of the optimization problems yields a feasible model, then each cell array value is [].

Otherwise, Mdl is a ClassificationLinear model object.

To reference properties of Mdl, use dot

notation.

Note

Unlike other classification models, and for economical memory usage,

ClassificationLinear and

ClassificationPartitionedLinear model objects do

not store the training data or training process details (for example,

convergence history).

Aggregate optimization results for multiple optimization problems, returned as an AggregateBayesianOptimization object. To return

AggregateOptimizationResults, you must specify

OptimizeHyperparameters and

HyperparameterOptimizationOptions. You must also specify the

ConstraintType and ConstraintBounds

options of HyperparameterOptimizationOptions. For an example that

shows how to produce this output, see Hyperparameter Optimization with Multiple Constraint Bounds.

Optimization details, returned as a structure array. Fields specify final values or name-value pair argument specifications. For example, Objective is the value of the objective function when optimization terminates. Rows of multidimensional fields correspond to values of Lambda and columns correspond to values of Solver.

This table describes some notable fields.

| Field | Description |
|---|---|
| TerminationStatus | |
| FitTime | Elapsed, wall-clock time in seconds |
| History | A structure array of optimization information for each iteration. |

To access fields, use dot notation. For example, to access the vector of objective function values for each iteration, enter FitInfo.History.Objective.

If you specify OptimizeHyperparameters and set the ConstraintType and ConstraintBounds options of HyperparameterOptimizationOptions, then FitInfo is an N-by-1 cell array of structure arrays, where N is equal to the number of rows in ConstraintBounds.

It is good practice to examine FitInfo to assess whether convergence is satisfactory.
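A minimal sketch of inspecting FitInfo after training follows. The data and solver settings are illustrative assumptions. The guard around History is also an assumption: the field can be empty in some configurations, in which case dot indexing into it would fail.

```matlab
rng(2);                                  % reproducible synthetic data (assumption)
X = randn(300,30);
Y = X(:,1) - X(:,2) + 0.5*randn(300,1) > 0;

[Mdl,FitInfo] = fitclinear(X,Y,'Solver','sgd','PassLimit',5);

disp(FitInfo.TerminationStatus)          % reason the optimization stopped
disp(FitInfo.Objective)                  % objective value at termination
disp(FitInfo.FitTime)                    % elapsed wall-clock time in seconds

% Read the per-iteration objective values only if History is populated.
if ~isempty(FitInfo.History)
    objectiveValues = FitInfo.History.Objective;
end
```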

Cross-validation optimization of hyperparameters, returned as a SupervisedLearningBayesianOptimization object, an AggregateBayesianOptimization object, or a table of hyperparameters and associated values. The output is nonempty when OptimizeHyperparameters has a value other than "none".

If you set the ConstraintType and ConstraintBounds options in HyperparameterOptimizationOptions, then HyperparameterOptimizationResults is an AggregateBayesianOptimization object. Otherwise, the value of HyperparameterOptimizationResults depends on the value of the Optimizer option in HyperparameterOptimizationOptions.

| Value of Optimizer Option | Value of HyperparameterOptimizationResults |
|---|---|
| "bayesopt" (default) | SupervisedLearningBayesianOptimization object |
| "gridsearch" or "randomsearch" | Table of hyperparameters used, observed objective function values (cross-validation loss), and observation ranks from lowest (best) to highest (worst) |

More About

A warm start is initial estimates of the beta coefficients and bias term supplied to an optimization routine for quicker convergence.
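As a hedged illustration of a warm start, the sketch below fits a model at one regularization strength and passes its coefficients as initial estimates for a second fit through the Beta and Bias name-value arguments. The data, the Lambda values, and the variable names are assumptions chosen for illustration.

```matlab
rng(3);                                  % reproducible synthetic data (assumption)
X = randn(300,40);
Y = X(:,1) + X(:,2) + 0.5*randn(300,1) > 0;

% First fit with stronger regularization.
coarseMdl = fitclinear(X,Y,'Learner','logistic','Solver','lbfgs', ...
    'Regularization','ridge','Lambda',1e-1);

% Second fit with weaker regularization, warm started from the first fit.
warmMdl = fitclinear(X,Y,'Learner','logistic','Solver','lbfgs', ...
    'Regularization','ridge','Lambda',1e-2, ...
    'Beta',coarseMdl.Beta,'Bias',coarseMdl.Bias);
```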

fitclinear and fitrlinear minimize objective functions relatively quickly for a high-dimensional linear model at the cost of some accuracy and with the restriction that the model must be linear with respect to the parameters. If your predictor data set is low- to medium-dimensional, you can use an alternative classification or regression fitting function. To help you decide which fitting function is appropriate for your data set, use this table.

| Model to Fit | Function | Notable Algorithmic Differences |
|---|---|---|
| SVM | | |
| Linear regression | | |
| Logistic regression | | |

Tips

- It is a best practice to orient your predictor matrix so that observations correspond to columns and to specify 'ObservationsIn','columns'. As a result, you can experience a significant reduction in optimization-execution time.
- If your predictor data has few observations but many predictor variables, then:
  - Specify 'PostFitBias',true.
  - For SGD or ASGD solvers, set PassLimit to a positive integer that is greater than 1, for example, 5 or 10. This setting often results in better accuracy.
- For SGD and ASGD solvers, BatchSize affects the rate of convergence.
  - If BatchSize is too small, then fitclinear achieves the minimum in many iterations, but computes the gradient per iteration quickly.
  - If BatchSize is too large, then fitclinear achieves the minimum in fewer iterations, but computes the gradient per iteration slowly.
- Large learning rates (see LearnRate) speed up convergence to the minimum, but can lead to divergence (that is, over-stepping the minimum). Small learning rates ensure convergence to the minimum, but can lead to slow termination.
- When using lasso penalties, experiment with various values of TruncationPeriod. For example, set TruncationPeriod to 1, 10, and then 100.
- For efficiency, fitclinear does not standardize predictor data. To standardize X where you orient the observations as the columns, enter

  X = normalize(X,2);

  If you orient the observations as the rows, enter

  X = normalize(X);

  For memory-usage economy, the code replaces the original predictor data with the standardized data.
- After training a model, you can generate C/C++ code that predicts labels for new data. Generating C/C++ code requires MATLAB Coder™. For details, see Introduction to Code Generation for Statistics and Machine Learning Functions.
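Several of the tips above can be combined in one short sketch: orient observations as columns, standardize each predictor, and set the SGD controls explicitly. The synthetic data and the particular values of PassLimit, BatchSize, and LearnRate are assumptions for illustration, not tuned settings.

```matlab
rng(4);                                  % reproducible synthetic data (assumption)
Xrows = randn(500,30);                   % observations in rows
Y = Xrows(:,1) - Xrows(:,2) > 0;         % logical class labels

X = Xrows';                              % observations in columns (30-by-500)
X = normalize(X,2);                      % standardize each predictor (row) across observations

Mdl = fitclinear(X,Y,'ObservationsIn','columns', ...
    'Solver','sgd', ...
    'PassLimit',5, ...                   % more than one pass through the data
    'BatchSize',20, ...                  % mini-batch size (illustrative)
    'LearnRate',0.01);                   % learning rate (illustrative)
```

Because normalize(X,2) operates along the second dimension, each row (one predictor) ends up with zero mean and unit standard deviation across observations.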

Algorithms

- If you specify ValidationData, then, during objective-function optimization:
  - fitclinear estimates the validation loss of ValidationData periodically using the current model, and tracks the minimal estimate.
  - When fitclinear estimates a validation loss, it compares the estimate to the minimal estimate.
  - When subsequent validation loss estimates exceed the minimal estimate five times, fitclinear terminates optimization.
- If you specify ValidationData and implement a cross-validation routine (CrossVal, CVPartition, Holdout, or KFold), then:
  1. fitclinear randomly partitions X and Y (or Tbl) according to the cross-validation routine that you choose.
  2. fitclinear trains the model using the training-data partition. During objective-function optimization, fitclinear uses ValidationData as another possible way to terminate optimization (for details, see the previous bullet).
  3. Once fitclinear satisfies a stopping criterion, it constructs a trained model based on the optimized linear coefficients and intercept.
     - If you implement k-fold cross-validation, and fitclinear has not exhausted all training-set folds, then fitclinear returns to Step 2 to train using the next training-set fold.
     - Otherwise, fitclinear terminates training, and then returns the cross-validated model.
  4. You can determine the quality of the cross-validated model. For example:
     - To determine the validation loss using the holdout or out-of-fold data from step 1, pass the cross-validated model to kfoldLoss.
     - To predict observations on the holdout or out-of-fold data from step 1, pass the cross-validated model to kfoldPredict.
- If you specify the Cost, Prior, and Weights name-value arguments, the output model object stores the specified values in the Cost, Prior, and W properties, respectively. The Cost property stores the user-specified cost matrix (C) without modification. The Prior and W properties store the prior probabilities and observation weights, respectively, after normalization. For model training, the software updates the prior probabilities and observation weights to incorporate the penalties described in the cost matrix. For details, see Misclassification Cost Matrix, Prior Probabilities, and Observation Weights.
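The cross-validation workflow described in the numbered steps above can be sketched as follows. The synthetic training and validation sets, the choice of the SGD solver, and the variable names are assumptions for illustration.

```matlab
rng(5);                                  % reproducible synthetic data (assumption)
X = randn(400,25);
Y = X(:,1) + 0.5*X(:,2) + 0.5*randn(400,1) > 0;

% Separate validation set used only as an additional stopping criterion.
Xval = randn(100,25);
Yval = Xval(:,1) + 0.5*Xval(:,2) + 0.5*randn(100,1) > 0;

% 5-fold cross-validation with validation-based early termination.
CVMdl = fitclinear(X,Y,'KFold',5,'Solver','sgd', ...
    'ValidationData',{Xval,Yval});

cvLoss = kfoldLoss(CVMdl);               % out-of-fold classification error
[labels,scores] = kfoldPredict(CVMdl);   % out-of-fold predictions
```

Here cvLoss summarizes generalization error over the out-of-fold observations, while labels contains one out-of-fold prediction per training observation.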

References

[1] Hsieh, C. J., K. W. Chang, C. J. Lin, S. S. Keerthi, and S. Sundararajan. “A Dual Coordinate Descent Method for Large-Scale Linear SVM.” Proceedings of the 25th International Conference on Machine Learning, ICML ’08, 2008, pp. 408–415.

[2] Langford, J., L. Li, and T. Zhang. “Sparse Online Learning Via Truncated Gradient.” J. Mach. Learn. Res., Vol. 10, 2009, pp. 777–801.

[3] Nocedal, J. and S. J. Wright. Numerical Optimization, 2nd ed., New York: Springer, 2006.

[4] Shalev-Shwartz, S., Y. Singer, and N. Srebro. “Pegasos: Primal Estimated Sub-Gradient Solver for SVM.” Proceedings of the 24th International Conference on Machine Learning, ICML ’07, 2007, pp. 807–814.

[5] Wright, S. J., R. D. Nowak, and M. A. T. Figueiredo. “Sparse Reconstruction by Separable Approximation.” Trans. Sig. Proc., Vol. 57, No. 7, 2009, pp. 2479–2493.

[6] Xiao, Lin. “Dual Averaging Methods for Regularized Stochastic Learning and Online Optimization.” J. Mach. Learn. Res., Vol. 11, 2010, pp. 2543–2596.

[7] Xu, Wei. “Towards Optimal One Pass Large Scale Learning with Averaged Stochastic Gradient Descent.” CoRR, abs/1107.2490, 2011.

Extended Capabilities

The fitclinear function supports tall arrays with the following usage notes and limitations:

- fitclinear does not support tall table data.
- Some name-value pair arguments have different defaults compared to the default values for the in-memory fitclinear function. Supported name-value pair arguments, and any differences, are:
  - 'ObservationsIn': Supports only 'rows'.
  - 'Lambda': Can be 'auto' (default) or a scalar.
  - 'Learner'
  - 'Regularization': Supports only 'ridge'.
  - 'Solver': Supports only 'lbfgs'.
  - 'FitBias': Supports only true.
  - 'Verbose': Default value is 1.
  - 'Beta'
  - 'Bias'
  - 'ClassNames'
  - 'Cost'
  - 'Prior'
  - 'Weights': Value must be a tall array.
  - 'HessianHistorySize'
  - 'BetaTolerance': Default value is relaxed to 1e-3.
  - 'GradientTolerance': Default value is relaxed to 1e-3.
  - 'IterationLimit': Default value is relaxed to 20.
  - 'OptimizeHyperparameters': Value of 'Regularization' parameter must be 'ridge'.
  - HyperparameterOptimizationOptions: For cross-validation, tall optimization supports only Holdout validation. By default, the software selects and reserves 20% of the data as holdout validation data, and trains the model using the rest of the data. You can specify a different value for the holdout fraction by using this argument. For example, specify HyperparameterOptimizationOptions=struct(Holdout=0.3) to reserve 30% of the data as validation data.
- For tall arrays, fitclinear implements LBFGS by distributing the calculation of the loss and gradient among different parts of the tall array at each iteration. Other solvers are not available for tall arrays.
- When initial values for Beta and Bias are not given, fitclinear refines the initial estimates of the parameters by fitting the model locally to parts of the data and combining the coefficients by averaging.

For more information, see Tall Arrays.
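A minimal sketch of training on tall arrays under the limitations listed above follows. It assumes small in-memory data converted to tall arrays purely for illustration, and it runs the computation in the local MATLAB session through mapreducer(0).

```matlab
rng(6);                                  % reproducible synthetic data (assumption)
X = randn(1000,10);                      % observations in rows, as tall arrays require
Y = X(:,1) - X(:,2) + 0.3*randn(1000,1) > 0;

mapreducer(0);                           % evaluate tall computations in the local session
tX = tall(X);
tY = tall(Y);

% Only the LBFGS solver and ridge regularization are supported for tall arrays.
Mdl = fitclinear(tX,tY,'Solver','lbfgs','Regularization','ridge', ...
    'Verbose',0);
```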

To perform parallel hyperparameter optimization, use the UseParallel=true option in the HyperparameterOptimizationOptions name-value argument in the call to the fitclinear function.

For more information on parallel hyperparameter optimization, see Parallel Bayesian Optimization.

For general information about parallel computing, see Run MATLAB Functions with Automatic Parallel Support (Parallel Computing Toolbox).

The fitclinear function supports GPU array input with these usage notes and limitations:

- X cannot be sparse.
- You cannot specify the Solver name-value argument as "sgd", "asgd", or "dual". For gpuArray inputs, the default solver is:
  - "bfgs" when you specify a ridge penalty and fewer than 101 predictor variables
  - "lbfgs" when you specify a ridge penalty and more than 100 predictor variables
  - "sparsa" when you specify a lasso penalty
- fitclinear fits the model on a GPU if one of the following applies:
  - The input argument X is a gpuArray object.
  - The input argument Tbl contains gpuArray predictor variables.
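The sketch below shows one way to pass GPU array input while keeping the code runnable on machines without a supported GPU. The fallback to in-memory data and the synthetic data set are assumptions for illustration. The solver is set to "lbfgs" because the SGD-based and dual solvers are not available for gpuArray inputs.

```matlab
rng(7);                                  % reproducible synthetic data (assumption)
X = randn(500,20);                       % full (nonsparse) predictor matrix
Y = X(:,1) + 0.5*randn(500,1) > 0;

% Move the predictors to the GPU only when one is available.
if canUseGPU
    Xfit = gpuArray(X);
else
    Xfit = X;                            % CPU fallback (illustrative)
end

Mdl = fitclinear(Xfit,Y,'Solver','lbfgs','Regularization','ridge');
```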

Version History

Introduced in R2016a

You can optimize classification model hyperparameters with respect to misclassification cost. When you use a classification fit function, specify the OptimizeHyperparameters and HyperparameterOptimizationOptions name-value arguments. In the HyperparameterOptimizationOptions structure or object, set