importKerasLayers

(To be removed) Import layers from Keras network

importKerasLayers will be removed in a future release. Use importNetworkFromTensorFlow instead. (since R2023b) For more information about updating your code, see Version History.

Description

Add-On Required: This feature requires the Deep Learning Toolbox Converter for TensorFlow Models add-on.

layers = importKerasLayers(modelfile) imports the layers of a TensorFlow-Keras network from the HDF5 (.h5) or JSON (.json) file given by the file name modelfile.

This function requires the Deep Learning Toolbox™ Converter for TensorFlow Models support package. If this support package is not installed, then the function provides a download link.

layers = importKerasLayers(modelfile,Name,Value) imports the layers with additional options specified by one or more name-value arguments.

For example, importKerasLayers(modelfile,'ImportWeights',true) imports the network layers and the weights from the model file modelfile.
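The two syntaxes above can be sketched as follows. This is a minimal illustration, assuming the support package is installed and that a Keras model file exists in the current folder; the file name myKerasModel.h5 is hypothetical.

```matlab
% Hypothetical file name; replace with the path to your own Keras model file.
modelfile = 'myKerasModel.h5';

% Import only the layer architecture.
layers = importKerasLayers(modelfile);

% Import the layers together with the weights stored in the model file.
layersWithWeights = importKerasLayers(modelfile,'ImportWeights',true);
```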

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Limitations

importKerasLayers supports TensorFlow-Keras versions as follows:

The function fully supports TensorFlow-Keras versions up to 2.2.4.

The function offers limited support for TensorFlow-Keras versions 2.2.5 to 2.4.0.

More About

Tips

If the network contains a layer that Deep Learning Toolbox Converter for TensorFlow Models does not support (see Supported Keras Layers), then importKerasLayers inserts a placeholder layer in place of the unsupported layer. To find the names and indices of the unsupported layers in the network, use the findPlaceholderLayers function. You can then replace a placeholder layer with a new layer that you define.

If the network is a series network, then replace the layer in the array directly. For example, layer(2) = newlayer;. If the network is a DAG network, then replace the layer using replaceLayer.
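The find-and-replace workflow described above might look like the following sketch. The file name and the choice of a ReLU layer as the replacement are hypothetical; in practice the replacement should implement whatever the unsupported Keras layer did.

```matlab
% Hypothetical file name; assumes the support package is installed.
modelfile = 'myKerasModel.h5';
layers = importKerasLayers(modelfile,'ImportWeights',true);

% Find the placeholder layers and their indices in the imported layers.
[placeholders,indices] = findPlaceholderLayers(layers);

if ~isempty(placeholders)
    % Hypothetical replacement layer that reuses the placeholder's name.
    newlayer = reluLayer('Name',placeholders(1).Name);

    if isa(layers,'nnet.cnn.LayerGraph')
        % DAG network: replace the layer by name in the layer graph.
        layers = replaceLayer(layers,placeholders(1).Name,newlayer);
    else
        % Series network: replace the layer in the array directly.
        layers(indices(1)) = newlayer;
    end
end
```

Only the first placeholder is replaced here; a model with several unsupported layers would need one replacement per placeholder.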

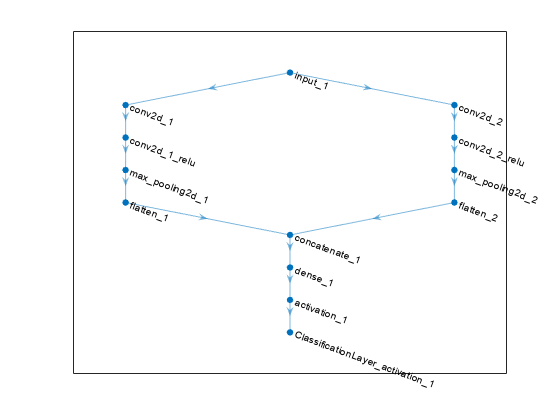

You can import a Keras network with multiple inputs and multiple outputs (MIMO). Use importKerasNetwork if the network includes input size information for the inputs and loss information for the outputs. Otherwise, use importKerasLayers. The importKerasLayers function inserts placeholder layers for the inputs and outputs. After importing, you can find and replace the placeholder layers by using findPlaceholderLayers and replaceLayer, respectively. To learn about a deep learning network with multiple inputs and multiple outputs, see Multiple-Input and Multiple-Output Networks.

To use a pretrained network for prediction or transfer learning on new images, you must preprocess your images in the same way as the images that were used to train the imported model. The most common preprocessing steps are resizing images, subtracting image average values, and converting the images from BGR format to RGB format.

For more information about preprocessing images for training and prediction, see Preprocess Images for Deep Learning.
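Two of the preprocessing steps mentioned above, channel reordering and mean subtraction, can be sketched on a synthetic image. The image data and the per-channel mean values are hypothetical; the real values depend on how the original model was trained. Resizing is omitted here to keep the sketch free of additional toolbox dependencies.

```matlab
% Synthetic 4-by-4 BGR image standing in for real data (hypothetical values).
imgBGR = rand(4,4,3,'single');

% Convert from BGR to RGB by reversing the order of the channels.
imgRGB = imgBGR(:,:,[3 2 1]);

% Hypothetical per-channel means; use the values from the original training setup.
channelMeans = reshape(single([0.5 0.4 0.3]),1,1,3);

% Subtract the channel means (implicit expansion over rows and columns).
imgCentered = imgRGB - channelMeans;
```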

MATLAB uses one-based indexing, whereas Python® uses zero-based indexing. In other words, the first element in an array has an index of 1 in MATLAB and 0 in Python. For more information about MATLAB indexing, see Array Indexing. In MATLAB, to use an array of indices (ind) created in Python, convert the array to ind+1.

For more tips, see Tips on Importing Models from TensorFlow, PyTorch, and ONNX.
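The index conversion between Python and MATLAB can be illustrated with a small self-contained sketch. The index and data values are hypothetical.

```matlab
% Zero-based indices as they might come from Python (hypothetical values).
ind = [0 2 4];
data = [10 20 30 40 50];

% Shift to one-based indices before indexing in MATLAB.
selected = data(ind+1);   % selected is [10 30 50]
```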

Alternative Functionality

Use importKerasNetwork or importKerasLayers to import a TensorFlow-Keras network in HDF5 or JSON format. If the TensorFlow network is in the saved model format, use importTensorFlowNetwork or importTensorFlowLayers.

If you import a custom TensorFlow-Keras layer or if the software cannot convert a TensorFlow-Keras layer into an equivalent built-in MATLAB layer, you can use importTensorFlowNetwork or importTensorFlowLayers, which try to generate a custom layer. For example, importTensorFlowNetwork and importTensorFlowLayers generate a custom layer when you import a TensorFlow-Keras Lambda layer.
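For a model in the saved model format, the alternative might be used as in the following sketch. The folder name mySavedModel is hypothetical, and the sketch assumes the support package is installed.

```matlab
% Hypothetical folder containing a TensorFlow model in the saved model format.
modelFolder = 'mySavedModel';

% Import the layers; the function tries to generate custom layers
% for TensorFlow-Keras layers it cannot convert to built-in layers.
lgraph = importTensorFlowLayers(modelFolder);
```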


Version History

Introduced in R2017b

See Also

trainnet | trainingOptions | dlnetwork | importNetworkFromTensorFlow | importKerasNetwork | importTensorFlowLayers | importONNXLayers | importTensorFlowNetwork | importNetworkFromPyTorch | findPlaceholderLayers | replaceLayer | importONNXNetwork | exportNetworkToTensorFlow | exportONNXNetwork