Get Started with Computer Vision Toolbox

Computer Vision Toolbox™ provides algorithms and apps for designing and testing computer vision systems. You can perform visual inspection, object detection and tracking, as well as feature detection, extraction, and matching. You can automate calibration workflows for single, fisheye, stereo, and multi-camera configurations. For 3D vision, the toolbox supports stereo vision, point cloud processing, structure from motion, and real-time visual and point cloud SLAM. Computer vision apps enable team-based ground truth labeling with automation, as well as camera calibration.

The toolbox provides a variety of AI techniques including pretrained convolutional neural networks (CNNs), vision transformers, and vision-language models. Use the out-of-the-box models for tasks like image classification, object detection, segmentation, pose estimation, captioning, and optical character recognition (OCR), or further customize them through transfer learning.

You can generate C and C++ code, code for GPU execution, and hardware description language (HDL) code.

Tutorials

- What Is Camera Calibration?

Estimate the parameters of a lens and image sensor of an image or video camera.

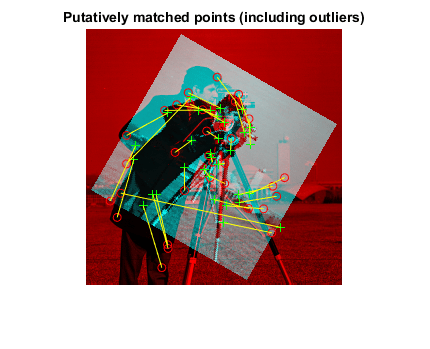

- What Is Structure from Motion?

Estimate three-dimensional structures from two-dimensional image sequences.

- Get Started with Object Detection Using Deep Learning

Perform object detection using deep learning neural networks such as YOLOX, YOLO v4, RTMDet, and SSD.

- Get Started with Semantic Segmentation Using Deep Learning

Segment objects by class using deep learning networks such as U-Net and DeepLab v3+.

- Get Started with Code Generation, Deployment, GPU, and OpenCV Support

C/C++ and GPU code generation and acceleration, HDL code generation, and OpenCV interface for MATLAB and Simulink.

- Computer Vision Toolbox with Simulink

Simulink® support for computer vision applications.

App and Workflow Decision Guides

- Choose an App to Label Ground Truth Data

Decide which app to use to label ground truth data: Image Labeler, Video Labeler, Ground Truth Labeler, Lidar Labeler, Signal Labeler, or Medical Image Labeler.

- Choose an Object Detector

Compare object detection deep learning models, such as YOLOX, YOLO v4, RTMDet, and SSD.

- Choose SLAM Workflow Based on Sensor Data

Choose the right simultaneous localization and mapping (SLAM) workflow and find topics, examples, and supported features.

- Choose a Point Cloud Viewer

Compare point cloud visualization functions.

Interactive Learning

Computer Vision Onramp

Learn how to use Computer Vision Toolbox for object detection and tracking.

Videos

What Is Computer Vision?

Discover how computer vision can be applied to a wide variety of application areas such as object detection, tracking, and recognition.

Camera Calibration in MATLAB

Automate checkerboard detection and calibrate pinhole and fisheye cameras using the Camera Calibrator app.

Teaching Resources

Computer Vision Basics

Learn the fundamentals of image segmentation in computer vision.