matchFeaturesInRadius

Find matching features within specified radius

Syntax

Description

indexPairs = matchFeaturesInRadius(features1,features2,points2,centerPoints,radius)

[indexPairs,matchMetric] = matchFeaturesInRadius(___) also returns the distance between the features in a matched pair in indexPairs.

[indexPairs,matchMetric] = matchFeaturesInRadius(___,Name,Value) specifies options using one or more name-value arguments in addition to the input arguments in previous syntaxes.

Examples
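
The following is a minimal usage sketch of the three syntaxes, not an official example. It assumes that Computer Vision Toolbox is installed and that the function accepts numeric descriptor matrices (one row per feature) and [x y] coordinate matrices for points2 and centerPoints. All data is synthetic, and the 'MaxRatio' name-value argument is an assumption that does not appear in this section; check the Name-Value Arguments list before relying on it.

```matlab
% Synthetic data only; assumes Computer Vision Toolbox is available.
rng(0);                                        % reproducible random descriptors
features1 = rand(4,32);                        % 4 descriptors for feature set one
features2 = [features1 + 0.01*rand(4,32);      % noisy copies of set one ...
             rand(2,32)];                      % ... plus 2 unrelated distractors

% [x y] location of each row of features2 in the second image.
points2 = [50 50; 150 60; 80 200; 220 180; 400 400; 10 300];

% Predicted location in the second image of each row of features1.
centerPoints = [52 49; 148 63; 79 203; 223 178];
radius = 15;                                   % search radius in pixels

% Syntax 1: indices of matched features only.
indexPairs = matchFeaturesInRadius(features1,features2,points2,centerPoints,radius);

% Syntax 2: also return the distance between the features in each matched pair.
[indexPairs,matchMetric] = matchFeaturesInRadius(features1,features2, ...
    points2,centerPoints,radius);

% Syntax 3: add a name-value argument ('MaxRatio' is assumed here).
[indexPairs,matchMetric] = matchFeaturesInRadius(features1,features2, ...
    points2,centerPoints,radius,'MaxRatio',0.9);
```

Each row of indexPairs holds an index into features1 and the index of its match in features2, so showMatchedFeatures can be used to inspect the result when real images and point locations are available.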

Input Arguments

Name-Value Arguments

Output Arguments

Tips

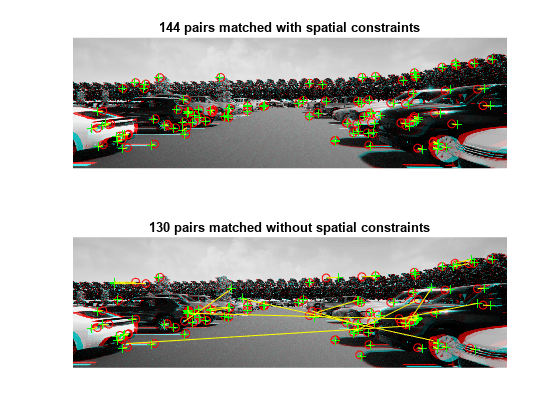

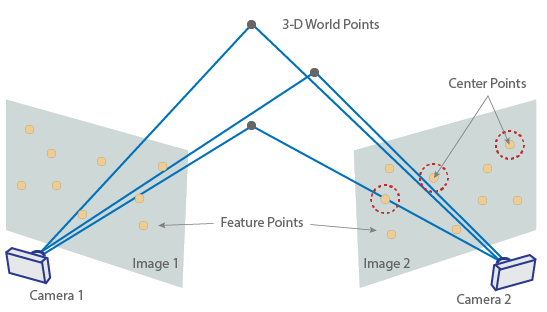

Use this function when the 3-D world points that correspond to feature set one, features1, are known. centerPoints can be obtained by projecting a 3-D world point onto the second image. You can obtain the 3-D world points by triangulating matched image points from two stereo images.

You can specify a circular area of points in feature set two to match with feature set one. Specify the origin as centerPoints with a radius specified by radius. Specify the points to match from feature set two as points2.
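
The idea in these tips can be illustrated with a small base-MATLAB sketch. It is not the function's implementation: it assumes a simple pinhole camera with hypothetical values for K, R, and t, uses toy three-element descriptors, and picks the nearest descriptor inside each circle without any thresholding or ratio test that the real function may apply.

```matlab
% Hypothetical camera for the second image (column-vector pinhole model).
K = [800 0 320; 0 800 240; 0 0 1];    % intrinsic matrix (assumed values)
R = eye(3);                           % world-to-camera rotation
t = [0; 0; 0];                        % world-to-camera translation

% Known 3-D world points, one per row, corresponding to the rows of features1.
worldPoints = [0 0 4; 1 0 4; 0 -0.5 2];

% Project the world points onto the second image to get the circle centers.
camPoints    = R * worldPoints' + t;            % 3-by-N camera coordinates
proj         = K * camPoints;                   % homogeneous pixel coordinates
centerPoints = (proj(1:2,:) ./ proj(3,:))';     % N-by-2 [x y]

% Toy descriptors, one row per feature, and the locations of set two.
features1 = [1 0 0; 0 1 0; 0 0 1];
features2 = [0 0.9 0.1; 0.9 0.1 0; 0 0.1 0.9; 1 0 0];
points2   = [525 238; 322 243; 318 45; 100 100];
radius    = 10;                                 % search radius in pixels

indexPairs  = zeros(0,2);
matchMetric = zeros(0,1);
for i = 1:size(features1,1)
    % Keep only set-two points inside the circle around centerPoints(i,:).
    offsets  = points2 - centerPoints(i,:);
    inRadius = find(sum(offsets.^2, 2) <= radius^2);
    if isempty(inRadius)
        continue
    end
    % Sum of squared differences between descriptor i and each candidate.
    d = sum((features2(inRadius,:) - features1(i,:)).^2, 2);
    [bestDist, k] = min(d);
    indexPairs(end+1,:)  = [i inRadius(k)];
    matchMetric(end+1,1) = bestDist;
end

disp(indexPairs)   % pairs found: [1 2; 2 1; 3 3]
```

The fourth row of features2 is an exact copy of the first row of features1, but it lies far from the projected center at [320 240], so it is never considered. That is the effect of the spatial constraint: matches are limited to candidates near where the known 3-D point is expected to appear.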

References

[1] Fraundorfer, Friedrich, and Davide Scaramuzza. “Visual Odometry: Part II: Matching, Robustness, Optimization, and Applications.” IEEE Robotics & Automation Magazine 19, no. 2 (June 2012): 78–90. https://doi.org/10.1109/MRA.2012.2182810.

[2] Lowe, David G. “Distinctive Image Features from Scale-Invariant Keypoints.” International Journal of Computer Vision 60, no. 2 (November 2004): 91–110. https://doi.org/10.1023/B:VISI.0000029664.99615.94.

[3] Muja, Marius, and David G. Lowe. “Fast Approximate Nearest Neighbors With Automatic Algorithm Configuration.” In Proceedings of the Fourth International Conference on Computer Vision Theory and Applications, 331–40. Lisboa, Portugal: SciTePress - Science and Technology Publications, 2009. https://doi.org/10.5220/0001787803310340.

[4] Muja, Marius, and David G. Lowe. “Fast Matching of Binary Features.” In 2012 Ninth Conference on Computer and Robot Vision, 404–10. New York: Institute of Electrical and Electronics Engineers, 2012. https://doi.org/10.1109/CRV.2012.60.

Extended Capabilities

Version History

Introduced in R2021a

See Also

Functions

showMatchedFeatures | extractFeatures | detectHarrisFeatures | detectSURFFeatures | detectORBFeatures | detectFASTFeatures | detectBRISKFeatures | detectMinEigenFeatures | estimateFundamentalMatrix | estimateGeometricTransform | detectMSERFeatures | estimateWorldCameraPose | worldToImage