Surround Vehicle Sensor Fusion

This example shows how to implement a synthetic data simulation to detect vehicles using multiple vision and radar sensors, and generate fused tracks for surround view analysis in Simulink® with Automated Driving Toolbox™. It also shows how to use quantitative analysis tools in Sensor Fusion and Tracking Toolbox™ for assessing the performance of a tracker.

Introduction

Sensor fusion and tracking is a fundamental perception component of automated driving applications. An autonomous vehicle uses many onboard sensors to understand the world around it. Each of the sensors the vehicle uses for self-driving applications, such as radar, camera, and lidar sensors, has its own limitations. The goal of sensor fusion and tracking is to take the inputs of different sensors and sensor types, and use the combined information to perceive the environment more accurately. Any cutting-edge autonomous driving system that can make critical decisions, such as highway lane following or highway lane change, strongly relies on sensor fusion and tracking. As such, you must test the design of sensor fusion and tracking systems using a component-level model. This model enables you to test critical scenarios that are difficult to test in real time.

This example shows how to fuse and track the detections from multiple vision detection sensors and a radar sensor. The sensors are mounted on the ego vehicle such that they provide 360-degree coverage around the ego vehicle. The example clusters radar detections, fuses them with vision detections, and tracks the detections using a joint probabilistic data association (JPDA) multi-object tracker. The example also shows how to evaluate the tracker performance using the generalized optimal subpattern assignment (GOSPA) metric for a set of predefined scenarios in an open-loop environment. In this example, you:

Explore the test bench model — The model contains sensors, a sensor fusion and tracking algorithm, and metrics to assess functionality. Detection generators driven by a driving scenario model the detections from the radar and vision sensors.

Configure sensors and environment — Set up a driving scenario that includes an ego vehicle with camera and radar sensors. Plot the coverage area of each sensor using the Bird's-Eye Scope.

Perform sensor fusion and tracking — Cluster radar detections, fuse them with vision detections, and track the detections using a JPDA multi-object tracker.

Evaluate performance of tracker — Use the GOSPA metric to evaluate the performance of the tracker.

Simulate the test bench model and analyze the results — The model configures a scenario with multiple target vehicles surrounding an ego vehicle that performs lane change maneuvers. Simulate the model, and analyze the components of the GOSPA metric to understand the performance of the tracker.

Explore other scenarios — These scenarios test the system under additional conditions.

Explore Test Bench Model

This example uses both a test bench model and a reference model of surround vehicle sensor fusion. The test bench model simulates and tests the behavior of the fusion and tracking algorithm in an open loop. The reference model implements the sensor fusion and tracking algorithm.

To explore the test bench model, load the surround vehicle sensor fusion project.

openProject("SVSensorFusion");

Open the test bench model.

open_system("SurroundVehicleSensorFusionTestBench");

Opening this model runs the helperSLSurroundVehicleSensorFusionSetup script, which initializes the road scenario using the drivingScenario object in the base workspace. The script also configures the sensor parameters, tracker parameters, and the Simulink bus signals required to define the inputs and outputs for the SurroundVehicleSensorFusionTestBench model. The test bench model contains these subsystems:

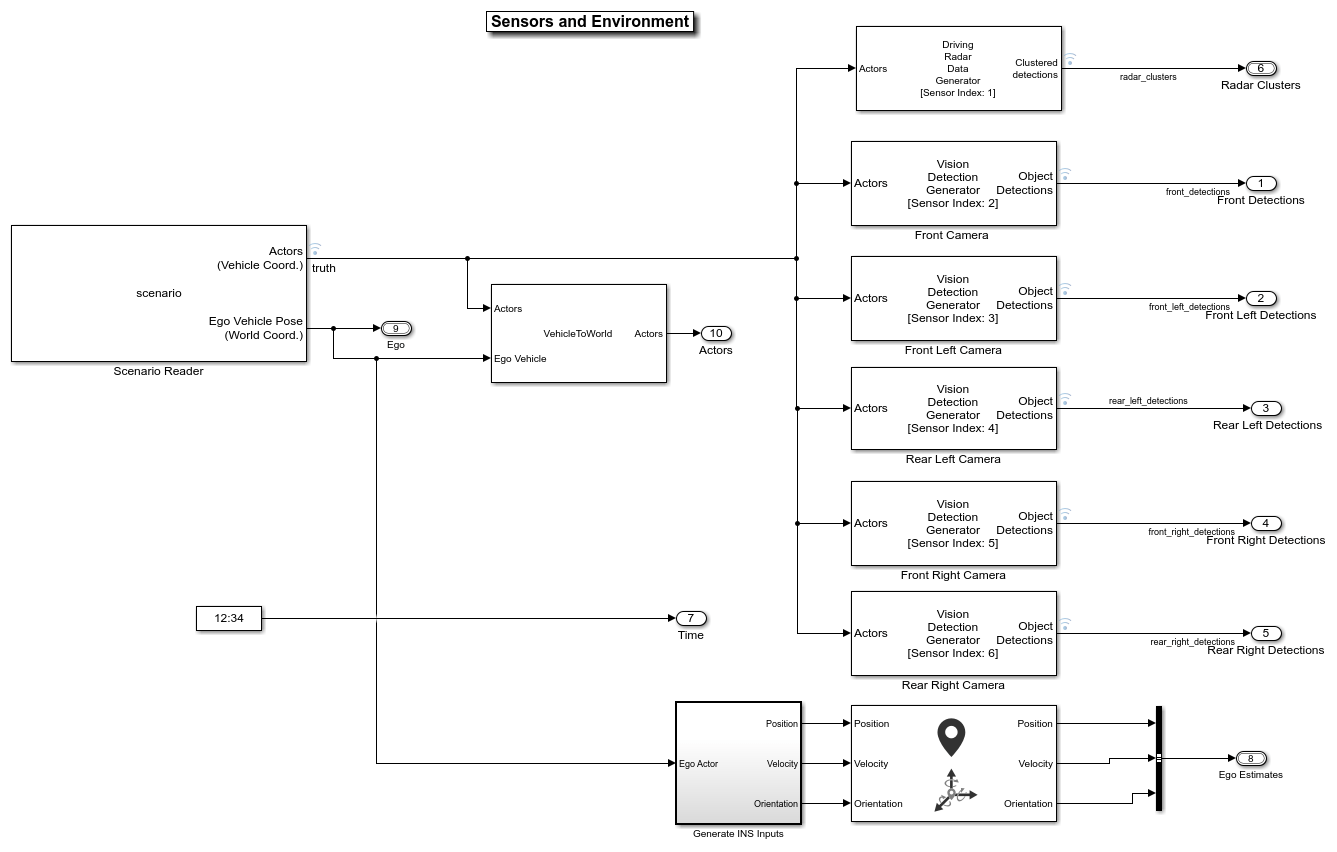

Sensors and Environment — This subsystem specifies the scene, camera, radar, and INS sensors used for simulation.

Surround Vehicle Sensor Fusion — This subsystem fuses the detections from multiple sensors to produce tracks.

Metrics Assessment — This subsystem assesses the surround vehicle sensor fusion design using the GOSPA metric.

Configure Sensors and Environment

The Sensors and Environment subsystem configures the road network, sets vehicle positions, and synthesizes sensors. Open the Sensors and Environment subsystem.

open_system("SurroundVehicleSensorFusionTestBench/Sensors and Environment");

The Scenario Reader block configures the driving scenario and outputs actor poses, which control the positions of the target vehicles.

The Vehicle To World block converts actor poses from the coordinates of the ego vehicle to the world coordinates.

The Vision Detection Generator block simulates object detections using a camera sensor model.

The Driving Radar Data Generator block simulates object detections based on a statistical model. It also outputs clustered object detections for further processing.

The INS block models the measurements from an inertial navigation system (INS) and a global navigation satellite system (GNSS) and outputs the fused measurements. It outputs the noise-corrupted position, velocity, and orientation of the ego vehicle.
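In the planar case, the conversion that the Vehicle To World block performs reduces to a rotation by the ego yaw followed by a translation by the ego position. The following is a minimal sketch in base MATLAB, assuming 2-D positions in meters, yaw angles in degrees measured counterclockwise, and a hypothetical ego pose stored as [x y yaw]. It illustrates the geometry only and is not the block's implementation.

```matlab
% Hypothetical ego pose in world coordinates: [x (m), y (m), yaw (deg)]
egoPose = [100, 50, 30];

% Hypothetical target positions in ego coordinates, one row per actor (m)
targetsEgo = [20,    0;    % 20 m straight ahead
               0, -3.5];   % one lane to the right

% Hypothetical target yaw angles relative to the ego heading (deg)
targetYawEgo = [0; 10];

% 2-D rotation from the ego frame to the world frame
c = cosd(egoPose(3));
s = sind(egoPose(3));
R = [c, -s; s, c];

% Rotate each row into world axes, then translate by the ego position
targetsWorld = (R * targetsEgo.').' + egoPose(1:2);

% Yaw angles simply add, because both frames share the same vertical axis
targetYawWorld = targetYawEgo + egoPose(3);
```

The same rotation, transposed, maps world coordinates back into the ego frame.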

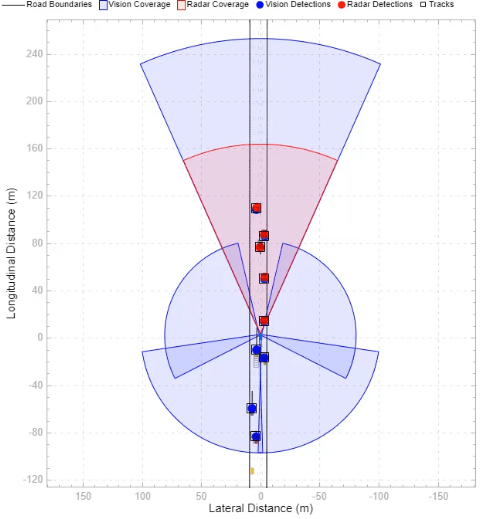
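A radar can return several detections from a single vehicle, which is why the clustered output of the Driving Radar Data Generator block is useful before fusion. The sketch below shows one simple, hypothetical way to cluster: single-linkage grouping of detections that lie within an assumed 3 m of each other, with each cluster represented by its centroid. It uses base MATLAB only, the detection positions are invented, and it is not the clustering algorithm that the block uses.

```matlab
% Hypothetical radar detection positions in ego coordinates (m), one per row
dets = [10.0,  0.2;
        10.6, -0.3;
        11.1,  0.4;    % three returns from one vehicle
        30.0,  3.5;
        30.8,  3.9];   % two returns from another vehicle

threshold = 3;         % assumed maximum gap between detections in one cluster (m)
n = size(dets, 1);

% Pairwise Euclidean distances without toolbox functions
dx = dets(:, 1) - dets(:, 1).';
dy = dets(:, 2) - dets(:, 2).';
adjacent = hypot(dx, dy) <= threshold;

% Single-linkage clustering: flood-fill the adjacency graph
labels = zeros(n, 1);
numClusters = 0;
for i = 1:n
    if labels(i) == 0
        numClusters = numClusters + 1;
        labels(i) = numClusters;
        frontier = i;
        while ~isempty(frontier)
            j = frontier(1);
            frontier(1) = [];
            % Unlabeled detections close to detection j join its cluster
            neighbors = find(adjacent(j, :) & (labels.' == 0));
            labels(neighbors) = numClusters;
            frontier = [frontier, neighbors];
        end
    end
end

% Represent each cluster by the centroid of its detections
centroids = zeros(numClusters, 2);
for k = 1:numClusters
    centroids(k, :) = mean(dets(labels == k, :), 1);
end
```

Single linkage is chosen here only because it is short to write; it can chain nearby vehicles together when they are closer than the threshold.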

The subsystem configures five vision sensors and a radar sensor to capture the surround view of the vehicle. These sensors are mounted at different locations on the ego vehicle to capture a 360-degree view. The helperSLSurroundVehicleSensorFusionSetup script sets the parameters of the sensor models.

The Bird's-Eye Scope displays sensor coverage by using a cuboid representation. The radar coverage area and detections are in red. The vision coverage area and detections are in blue.
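Whether a sensor layout actually yields 360-degree coverage can be checked by sampling azimuths around the vehicle. The sketch below does this in base MATLAB for hypothetical mounting yaw angles and fields of view (five cameras and one radar). These values are illustrative only, not the ones set by helperSLSurroundVehicleSensorFusionSetup, and the check ignores sensor range and mounting offsets.

```matlab
% Hypothetical sensor mounting yaw angles (deg, counterclockwise from the
% ego vehicle's forward axis) and horizontal fields of view (deg)
sensorYaw = [0, 70, -70, 140, -140, 180];   % five cameras, then the radar
sensorFov = [80, 90, 90, 80, 80, 40];

az = -179:180;                   % test azimuths at 1-degree steps
covered = false(size(az));
numSensorsSeeing = zeros(size(az));

for k = 1:numel(sensorYaw)
    % Angle between each test azimuth and the sensor boresight, wrapped
    % into [-180, 180)
    offset = mod(az - sensorYaw(k) + 180, 360) - 180;
    inView = abs(offset) <= sensorFov(k) / 2;
    covered = covered | inView;
    numSensorsSeeing = numSensorsSeeing + inView;
end

fprintf('Azimuth coverage: %d of %d degrees\n', nnz(covered), numel(az));
fprintf('Minimum number of overlapping sensors: %d\n', min(numSensorsSeeing));
```

Counting overlapping sensors per azimuth, not just coverage, shows where only one sensor sees a target and where radar and vision detections can be fused.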

Perform Sensor Fusion and Tracking

Surround Vehicle Sensor Fusion is the reference model that processes vision and radar detections and generates the position and velocity of the tracks relative to the ego vehicle. Open the Surround Vehicle Sensor Fusion reference model.

open_system("SurroundVehicleSensorFusion");

The Vision Detection Concatenation block concatenates the vision detections. The prediction time is driven by a clock in the Sensors and Environment subsystem.

The Delete Velocity From Vision block is a MATLAB Function block that deletes velocity information from vision detections.

The Vision and Radar Detection Concatenation block concatenates the vision and radar detections.

The Add Localization Information block is a MATLAB Function block that adds localization information for the ego vehicle to the concatenated detections using an estimated ego vehicle pose from the INS sensor. This enables the tracker to track in the global frame and minimizes the effect of ego vehicle lane change maneuvers on the tracks.

The Joint Probabilistic Data Association Multi Object Tracker (Sensor Fusion and Tracking Toolbox) block performs the fusion and manages the tracks of stationary and moving objects.

The Estimate Yaw block is a MATLAB Function block that estimates the yaw for the tracks and appends it to the Tracks output. Yaw information is useful when you integrate this component-level model with closed-loop systems such as a highway lane change system.

The Convert To Ego block is a MATLAB Function block that converts the tracks from the global frame to the ego frame using the estimated ego vehicle information. The Bird's-Eye Scope displays tracks in the ego frame.
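The first blocks in this list prepare the vision detections for fusion. The sketch below illustrates concatenation and velocity removal with plain structs. It is a hypothetical illustration: the field names mirror the Measurement and MeasurementNoise properties of objectDetection, the measurement layout [x; y; z; vx; vy; vz] is an assumption, and the camera names and values are invented. Whatever the Delete Velocity From Vision block does internally, a measurement and its noise covariance must stay dimensionally consistent, so the sketch trims both.

```matlab
% Helper that builds a hypothetical vision detection as a plain struct.
% Assumed measurement layout: [x; y; z; vx; vy; vz]
makeDet = @(m) struct('Measurement', m, ...
    'MeasurementNoise', diag([0.5, 0.5, 0.1, 2.0, 2.0, 0.1]));

% Hypothetical detections from two of the cameras
frontCamDets = {makeDet([25; -1.8; 0; 3.0; 0.1; 0])};
rearCamDets  = {makeDet([-15; 0.2; 0; -1.0; 0; 0]), ...
                makeDet([-30; 3.6; 0;  2.0; 0; 0])};

% Concatenate the detections from all cameras into one list
visionDets = [frontCamDets, rearCamDets];

% Keep only the position part of each measurement, together with the
% matching block of the noise covariance
posIdx = 1:3;
for k = 1:numel(visionDets)
    visionDets{k}.Measurement = visionDets{k}.Measurement(posIdx);
    visionDets{k}.MeasurementNoise = ...
        visionDets{k}.MeasurementNoise(posIdx, posIdx);
end
```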

The Joint Probabilistic Data Association Multi-Object Tracker is a key block in the Surround Vehicle Sensor Fusion reference model. The tracker fuses the information contained in concatenated detections and tracks the objects around the ego vehicle. The tracker then outputs a list of confirmed tracks. These tracks are updated at a prediction time driven by a digital clock in the Sensors and Environment subsystem.
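This example does not describe the tracker's internals. To illustrate the idea behind JPDA, the sketch below computes marginal association probabilities for two tracks and two detections by enumerating every feasible joint event. The setup is simplified and hypothetical (fixed detection probability, uniform clutter density, one shared innovation covariance, no gating), it is written in base MATLAB, and it is not the implementation used by the Joint Probabilistic Data Association Multi Object Tracker block.

```matlab
% Hypothetical predicted track positions and detections in 2-D (m)
trackPred = [10, 0;
             12, 3];
dets = [10.5, 0.4;
        11.6, 2.5];
S = eye(2);              % assumed innovation covariance, shared by both tracks (m^2)
Pd = 0.9;                % assumed probability of detection
clutterDensity = 1e-3;   % assumed false-alarm density (1/m^2)

numTracks = size(trackPred, 1);
numDets = size(dets, 1);

% Gaussian likelihood g(t, j) that detection j originates from track t
g = zeros(numTracks, numDets);
for t = 1:numTracks
    for j = 1:numDets
        r = (dets(j, :) - trackPred(t, :)).';
        g(t, j) = exp(-0.5 * (r.' / S) * r) / sqrt(det(2 * pi * S));
    end
end

% Enumerate joint events for exactly two tracks: a(t) is the detection
% assigned to track t, and 0 means the track was missed. Events that
% assign one detection to both tracks are infeasible and skipped.
beta = zeros(numTracks, numDets);   % marginal association probabilities
totalWeight = 0;
for a1 = 0:numDets
    for a2 = 0:numDets
        a = [a1, a2];
        assigned = a(a > 0);
        if numel(unique(assigned)) < numel(assigned)
            continue
        end
        % Detections not assigned to any track are explained as clutter
        w = clutterDensity ^ (numDets - numel(assigned));
        for t = 1:numTracks
            if a(t) == 0
                w = w * (1 - Pd);          % track t was not detected
            else
                w = w * Pd * g(t, a(t));   % track t produced detection a(t)
            end
        end
        totalWeight = totalWeight + w;
        for t = 1:numTracks
            if a(t) > 0
                beta(t, a(t)) = beta(t, a(t)) + w;
            end
        end
    end
end
beta = beta / totalWeight;          % normalize over all feasible events
betaMissed = 1 - sum(beta, 2);      % probability that each track was missed
```

Because the probabilities are marginalized over all joint events, a detection lying between two tracks contributes to both track updates, weighted by beta, instead of being committed to a single track.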

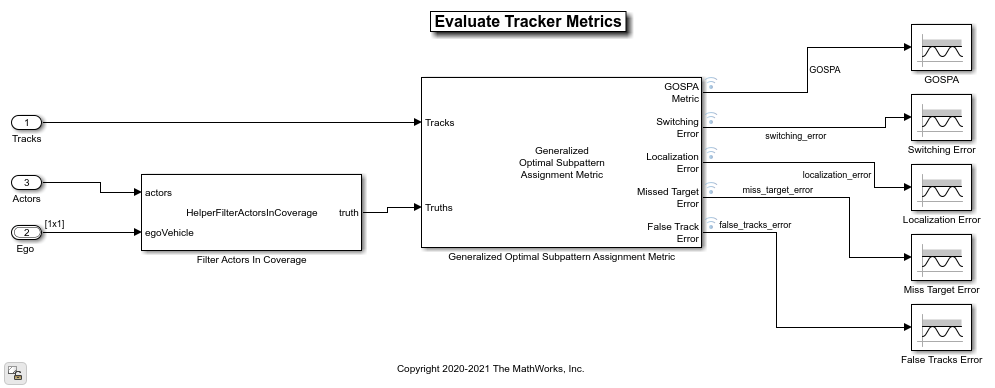
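The Estimate Yaw and Convert To Ego blocks described above both come down to a few lines of planar geometry. The sketch below is a minimal illustration, not the code inside those MATLAB Function blocks. It assumes 2-D states, angles in degrees, and a yaw estimate taken from the direction of the track velocity, which is one common choice but an assumption here. All names and values are hypothetical.

```matlab
% Hypothetical track state in the global frame: position (m), velocity (m/s)
trackPos = [130, 62];
trackVel = [15, 2];

% Hypothetical estimated ego state in the global frame
egoPos = [100, 60];   % position (m)
egoYaw = 5;           % heading (deg, counterclockwise from the global x-axis)
egoVel = [20, 1];     % velocity (m/s)

% Yaw estimate: heading of the velocity vector (deg). This assumes the
% target moves forward with negligible sideslip.
trackYawGlobal = atan2d(trackVel(2), trackVel(1));

% Rotation matrix from the global frame into the ego frame
c = cosd(egoYaw);
s = sind(egoYaw);
Rglobal2ego = [c, s; -s, c];

% Express position and relative velocity in ego axes. This simplified
% relative velocity ignores the term caused by the ego yaw rate.
trackPosEgo = (Rglobal2ego * (trackPos - egoPos).').';
trackVelEgo = (Rglobal2ego * (trackVel - egoVel).').';
trackYawEgo = trackYawGlobal - egoYaw;
```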

Evaluate Performance of Tracker

The Metrics Assessment subsystem computes various metrics to assess the performance of a tracker. Open the Metrics Assessment subsystem.

open_system("SurroundVehicleSensorFusionTestBench/Metrics Assessment");

To evaluate the performance of a tracker, you must remove the ground truth information of the actors that are outside the coverage area of the sensors. For this purpose, the subsystem uses the Filter Within Coverage MATLAB Function block to filter only those actors that are within the coverage area of the sensors.
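A minimal sketch of such a filter follows, assuming each sensor's coverage is a planar sector defined by a mounting position, boresight yaw, horizontal field of view, and maximum range. The sensor values and truth positions are hypothetical, and this is not the code inside the Filter Within Coverage block.

```matlab
% Hypothetical sensor coverage: mounting position on the ego vehicle [x y] (m),
% boresight yaw (deg), horizontal field of view (deg), and maximum range (m)
sensors = struct( ...
    'Position', {[3.7, 0], [-1, 0]}, ...
    'Yaw',      {0, 180}, ...
    'Fov',      {60, 90}, ...
    'MaxRange', {100, 50});

% Hypothetical ground-truth positions in ego coordinates (m), one per row
truthPos = [ 50,  1;    % ahead, inside the front sensor's coverage
            150,  0;    % ahead, but beyond the front sensor's range
              0, 20;    % directly to the left, outside both fields of view
            -30, -2];   % behind, inside the rear sensor's coverage

isCovered = false(size(truthPos, 1), 1);
for k = 1:numel(sensors)
    rel = truthPos - sensors(k).Position;         % vectors from sensor to truths
    distToTruth = hypot(rel(:, 1), rel(:, 2));
    az = atan2d(rel(:, 2), rel(:, 1)) - sensors(k).Yaw;
    az = mod(az + 180, 360) - 180;                % wrap to [-180, 180)
    inView = distToTruth <= sensors(k).MaxRange & abs(az) <= sensors(k).Fov / 2;
    isCovered = isCovered | inView;               % covered by any sensor
end

truthsToEvaluate = truthPos(isCovered, :);        % only these enter the metric
```

Without this step, the truth at 150 m would count as a missed target even though no sensor could detect it.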

The subsystem contains a GOSPA Metric block that computes these metrics:

GOSPA metric — Measures the distance between a set of tracks and their ground truths. This metric combines both assignment and state-estimation accuracy into a single cost value.

Switching error — Indicates the error that results from track switching. A higher switching error indicates incorrect assignments of tracks to truths when tracks switch.

Localization error — Indicates the state-estimation accuracy. A higher localization error indicates that the assigned tracks do not estimate the state of the truths correctly.

Missed target error — Indicates the presence of missed targets. A higher missed target error indicates that targets are not being tracked.

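False tracks error — Indicates the presence of false tracks. A higher false tracks error indicates that the tracker reports tracks that do not correspond to any truth.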
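To make the relationship between these components concrete, the sketch below computes the GOSPA metric by brute force for a few 2-D objects, assuming Euclidean position error, order p = 2, α = 2, and a cutoff c = 30 m. With α = 2, GOSPA minimizes, over assignments γ of track-truth pairs closer than c, the sum of d^p over assigned pairs plus (c^p/2)(|X| + |Y| - 2|γ|), and then takes the p-th root. The sketch reports the localization, missed target, and false tracks terms before the root is taken and omits the switching error. The positions are hypothetical, and the code is not the GOSPA Metric block's implementation.

```matlab
% Hypothetical 2-D positions (m): three truths and three tracks, one per row
truths = [ 0, 0;
          10, 0;
          20, 5];
tracks = [ 0.5, -0.5;   % close to truth 1
          10.0,  1.0;   % close to truth 2
          60.0, 40.0];  % far from every truth
c = 30;   % assumed cutoff distance (m)
p = 2;    % assumed order; alpha = 2 is built into the penalties below

numTracks = size(tracks, 1);
numTruths = size(truths, 1);

% Pairwise Euclidean distances, saturated at the cutoff
D = zeros(numTracks, numTruths);
for i = 1:numTracks
    for j = 1:numTruths
        D(i, j) = min(norm(tracks(i, :) - truths(j, :)), c);
    end
end

% Candidate assignments: every injective mapping of the smaller set into
% the larger one (brute force, fine for a handful of objects; assumes
% both sets are non-empty)
if numTracks <= numTruths
    cand = perms(1:numTruths);
    cand = cand(:, 1:numTracks);   % truth index for each track
    pairDist = @(row) D(sub2ind(size(D), 1:numTracks, row));
else
    cand = perms(1:numTracks);
    cand = cand(:, 1:numTruths);   % track index for each truth
    pairDist = @(row) D(sub2ind(size(D), row, 1:numTruths));
end

bestCost = inf;
for k = 1:size(cand, 1)
    d = pairDist(cand(k, :));
    matched = d < c;               % pairs at the cutoff count as unassigned
    numMatched = nnz(matched);
    cost = sum(d(matched) .^ p) + ...
        (c ^ p / 2) * (numTracks + numTruths - 2 * numMatched);
    if cost < bestCost
        bestCost = cost;
        localizationTerm = sum(d(matched) .^ p);
        missedTerm = (c ^ p / 2) * (numTruths - numMatched);
        falseTrackTerm = (c ^ p / 2) * (numTracks - numMatched);
    end
end

gospa = bestCost ^ (1 / p);
```

Here the far track cannot be matched, so it adds c^p/2 as a false track and leaves truth 3 to add c^p/2 as a missed target, while the two good tracks contribute only a small localization term.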

Simulate Test Bench Model and Analyze Results

During simulation, you can visualize the scenario using the Bird's-Eye Scope. To open the scope, click Bird's-Eye Scope in the Review Results section of the Simulink toolstrip. Next, click Update Signals to find and update signals that the scope can display. Select the tracksInEgo signal for the confirmed tracks.

Configure the SurroundVehicleSensorFusionTestBench model to simulate the scenario_LC_06_DoubleLaneChange scenario. This scenario contains 10 vehicles, including the ego vehicle, and defines their trajectories. In this scenario, the ego vehicle changes lanes twice while the target actors move around it.

helperSLSurroundVehicleSensorFusionSetup("scenarioFcnName","scenario_LC_06_DoubleLaneChange");

Simulate the test bench model.

sim("SurroundVehicleSensorFusionTestBench");

Once the simulation starts, use the Bird's-Eye Scope window to visualize the ego actor, target actors, sensor coverages and detections, and confirmed tracks.

During simulation, the model outputs the GOSPA metric and its components, which measure the statistical distance between multiple tracks and truths. The model logs these metrics, along with the confirmed tracks and ground truth information, to the base workspace variable logsout. You can plot the values in logsout by using the helperPlotSurroundVehicleSensorFusionResults function.

hFigResults = helperPlotSurroundVehicleSensorFusionResults(logsout);

In this simulation, the Distance Type and Cutoff distance parameters of the GOSPA Metric block are set to custom and 30, respectively. The helperComputeDistanceToTruth function computes the custom distance by combining the errors in position and velocity between each truth and track.

Close the figure.

close(hFigResults);
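The formula inside helperComputeDistanceToTruth is not shown in this example. As a hypothetical illustration of why a distance that combines position and velocity errors can be useful, the sketch below defines one such distance (Euclidean position error plus a weighted Euclidean velocity error, with an assumed weight of 0.5) and saturates it at the cutoff of 30 used in the simulation. The states and the weight are invented for illustration.

```matlab
% Hypothetical custom distance between a track and a truth. Each state is
% [x y vx vy] in meters and meters per second. The velocity weight is an
% assumption, not the value used by helperComputeDistanceToTruth.
velWeight = 0.5;
customDistance = @(track, truth) ...
    norm(track(1:2) - truth(1:2)) + velWeight * norm(track(3:4) - truth(3:4));

cutoff = 30;   % same cutoff value as in the simulation above

truth  = [20, 3.5, 25, 0];
trackA = [21, 3.0, 24, 0.5];   % good estimate of position and velocity
trackB = [20, 3.5, 10, 0];     % correct position, badly wrong speed
trackC = [80, 3.5, 25, 0];     % correct velocity, far away

dA = min(customDistance(trackA, truth), cutoff);
dB = min(customDistance(trackB, truth), cutoff);
dC = min(customDistance(trackC, truth), cutoff);
```

With a position-only distance, trackB would appear perfect; the velocity term exposes its speed error, and the cutoff caps the contribution of trackC.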

Explore Other Scenarios

You can use the procedure in this example to explore these other scenarios, which are compatible with SurroundVehicleSensorFusionTestBench:

scenario_LC_01_SlowMoving

scenario_LC_02_SlowMovingWithPassingCar

scenario_LC_03_DisabledCar

scenario_LC_04_CutInWithBrake

scenario_LC_05_SingleLaneChange

scenario_LC_06_DoubleLaneChange [Default]

scenario_LC_07_RightLaneChange

scenario_LC_08_SlowmovingCar_Curved

scenario_LC_09_CutInWithBrake_Curved

scenario_LC_10_SingleLaneChange_Curved

scenario_LC_11_MergingCar_HighwayEntry

scenario_LC_12_CutInCar_HighwayEntry

scenario_LC_13_DisabledCar_Ushape

scenario_LC_14_DoubleLaneChange_Ushape

scenario_LC_15_StopnGo_Curved

scenario_SVSF_01_ConstVelocityAsTargets

scenario_SVSF_02_CrossTargetActors

Use these additional scenarios to analyze SurroundVehicleSensorFusionTestBench under different conditions.

Conclusion

This example showed how to simulate and evaluate the performance of the surround vehicle sensor fusion and tracking component for highway lane change maneuvers. This component-level model lets you stress test your design in an open-loop virtual environment and helps in tuning the tracker parameters by evaluating GOSPA metrics. The next logical step is to integrate this component-level model into a closed-loop system such as a highway lane change system.

See Also

Scenario Reader | Vehicle To World | Vision Detection Generator | Driving Radar Data Generator