Developing High-Integrity Aircraft Approach Systems in Accordance with DO-178B Using Model-Based Design

By Jan D'Espallier, Septentrio

When visibility is poor, pilots often rely on ground-based instrument landing systems (ILS) to land their aircraft. Global navigation satellite system (GNSS) and satellite-based augmentation system (SBAS) technologies provide a reliable, accurate, and cost-effective alternative in places where no ILS is available. GNSS-based landing systems often increase runway capacity because, unlike ILS, they do not require a long, straight line of descent.

Septentrio's AiRx2 OEM is a GNSS+SBAS receiver for precision aviation applications. In addition to supporting runway approach with localizer performance with vertical guidance (LPV) and en route navigation, AiRx2 can be used for aircraft surveillance applications.

As a high-integrity aircraft system, AiRx2 must meet the stringent development and verification requirements of the DO-178B standard. Model-Based Design enables us to streamline the certification process by tracking requirements, verifying the design using simulation, and maintaining the system model as the single source of truth throughout development.

We rapidly prototype design alternatives and evaluate them in simulation without waiting for a programmer to code them. This approach enables us not only to find the best design but also to verify the design much earlier in development. Because we have verified our models via simulation, the resulting generated code is virtually bug-free.

DO-178 Workflows and Requirements

DO-178B, Software Considerations in Airborne Systems and Equipment Certification, is the current version of the DO-178 international safety standard used to certify high-integrity commercial avionic system software, such as the software in Septentrio’s AiRx2. Published by RTCA, DO-178B outlines considerations that organizations may use to secure FAA approval of the software they develop.

The processes described in DO-178B cover planning, development, verification, configuration management, and quality assurance for software systems development. Organizations are free to map DO-178B procedures to automatic code generation using Simulink® and Embedded Coder®.

In developing the AiRx2 software, Septentrio followed a well-defined workflow for using Simulink system models and Model-Based Design to build high-integrity systems that satisfy DO-178B. We used DO Qualification Kit to help prepare tool qualification plans for certification authorities and document tool operational requirements. DO Qualification Kit supports the DO-178B certification process with resources for qualifying MathWorks software verification tools, including Simulink Verification and Validation™ (transitioned at R2017b) and Polyspace® code verifiers.

Editor’s Note: An update to the standard, DO-178C, was recently published by RTCA, but at the time of writing, its use has not been approved by the FAA. DO-178C provides guidance on the use of Model-Based Design, such as how to map DO-178 objectives to model and code generation activities, through a supplemental document, DO-331: Model-Based Development and Verification Supplement to DO-178C and DO-278A.

Selecting a Design Environment and Workflow

MATLAB® is used extensively at Septentrio, particularly by the research department. Selecting Simulink and Embedded Coder for AiRx2 development puts us on a path to closer collaboration between the research and development groups.

In addition to Simulink, our engineers evaluated a modeling environment designed specifically for safety-critical systems. We found that this environment did not offer the flexibility we needed. It lacked the simulation capabilities of Simulink, including the ability to generate inputs for test scenarios. While it could generate certified code, it would have severely limited the number of blocks that we could use. We would have had to build our own libraries for matrix operations and many other functions essential to the navigation component of AiRx2.

We needed a way to develop designs easily, generate test inputs, and assess options through simulation. Model-Based Design with MATLAB and Simulink supports the full development workflow, including tracing system requirements, architecting the two main system components, verifying the system design, generating the source code, and verifying the source code.

Capturing and Tracing System Requirements

The high-level system requirements for AiRx2 come in two parts. The first comprises the user or sales requirements, which we capture in a Word document. The second comprises requirements from RTCA that define minimum operational performance standards (MOPS) for airborne global positioning system (GPS) equipment, described in a detailed, 250-page document. We import both sets of requirements into the DOORS® requirements management system. We link the system requirements to the high-level software requirements using DOORS. The high-level software requirements, in turn, are directly linked to the low-level software requirements modeled in Simulink.
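
The chain of links described above (system requirements to high-level software requirements to low-level software requirements) can be pictured with a minimal sketch. It is not how DOORS or Simulink store links; the requirement IDs, the dictionaries, and the function names are all hypothetical and chosen only for illustration.

```python
# Hypothetical link tables; a real project would export these from its
# requirements management tool rather than hard-code them.
SYS_TO_HLR = {"SYS-1": ["HLR-1", "HLR-2"], "SYS-2": []}
HLR_TO_LLR = {"HLR-1": ["LLR-1"], "HLR-2": []}


def trace(system_req, sys_to_hlr, hlr_to_llr):
    """Return the high-level and low-level requirements reachable from one system requirement."""
    hlrs = list(sys_to_hlr.get(system_req, []))
    llrs = [llr for hlr in hlrs for llr in hlr_to_llr.get(hlr, [])]
    return hlrs, llrs


def unlinked(req_ids, links):
    """Return the requirements that have no downstream link at all."""
    return sorted(req for req in req_ids if not links.get(req))


hlrs, llrs = trace("SYS-1", SYS_TO_HLR, HLR_TO_LLR)
missing_hlr = unlinked(SYS_TO_HLR, SYS_TO_HLR)   # system requirements with no HLR
missing_llr = unlinked(HLR_TO_LLR, HLR_TO_LLR)   # HLRs with no LLR
```

A check like `unlinked` is the kind of gap report a traceability review looks for: any requirement it returns has not yet been carried down to the next level.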

Architecting the System

We have organized our top-level model into manageable levels of hierarchy by using model referencing and incorporating several submodels or modules (Figure 1). These submodels include the main measurement module, which calculates the distances to satellites based on the signals it receives, and the navigation module, which translates the measured distances into a position. This architecture enables individual engineers to work independently on different aspects of the design. All models and requirements documents are under version control using Apache Subversion (SVN), which also facilitates team collaboration.

Figure 1. Simulink model of part of the AiRx2 system.
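
To make the split between the two modules concrete, here is a textbook-style sketch of turning measured distances into a position. It is not Septentrio's algorithm: it works in two dimensions, ignores receiver clock bias and measurement noise, and uses a plain Gauss-Newton least-squares iteration. The function name, the anchor coordinates, and the starting guess are assumptions made for the example.

```python
import math


def estimate_position(anchors, ranges, guess, iterations=20):
    """Gauss-Newton least-squares fit of a 2-D position to measured ranges."""
    x, y = guess
    for _ in range(iterations):
        a11 = a12 = a22 = b1 = b2 = 0.0
        for (ax, ay), measured in zip(anchors, ranges):
            dx, dy = x - ax, y - ay
            predicted = math.hypot(dx, dy)
            # Unit vector from the anchor to the current estimate
            # (one row of the Jacobian of the predicted range).
            ux, uy = dx / predicted, dy / predicted
            residual = measured - predicted
            # Accumulate the 2x2 normal equations (J^T J) dp = J^T r.
            a11 += ux * ux
            a12 += ux * uy
            a22 += uy * uy
            b1 += ux * residual
            b2 += uy * residual
        det = a11 * a22 - a12 * a12
        # Solve the 2x2 system with Cramer's rule and apply the update.
        x += (b1 * a22 - a12 * b2) / det
        y += (a11 * b2 - a12 * b1) / det
    return x, y


# Hypothetical transmitter positions and a known true position, used only
# to generate noise-free "measurements" for the demonstration.
ANCHORS = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
TRUE_POS = (3.0, 4.0)
RANGES = [math.hypot(TRUE_POS[0] - ax, TRUE_POS[1] - ay) for ax, ay in ANCHORS]

estimate = estimate_position(ANCHORS, RANGES, guess=(1.0, 1.0))
```

In this picture, producing `RANGES` corresponds to the role of the measurement module and `estimate_position` to the role of the navigation module, which is why the two can be developed and tested separately.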

In partitioning the model, we divided the overall design along boundaries that make each module easy to test. We wanted to run the same test cases on the generated C source code that we use to verify the design in Simulink. By comparing the test results from the simulation with the test results from the code, we will be able to verify the software against the model. Our careful partitioning of the model will make test case reuse straightforward.
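
The comparison of simulation results with code results can be sketched as follows. This is only an illustration of the idea, not the tooling used on the project; the function name, the tolerances, and the sample values are assumptions.

```python
import math


def compare_outputs(sim_outputs, code_outputs, rel_tol=1e-9, abs_tol=1e-12):
    """Return the indices at which the code output departs from the simulation output."""
    if len(sim_outputs) != len(code_outputs):
        raise ValueError("simulation and code produced different numbers of samples")
    return [
        index
        for index, (sim, code) in enumerate(zip(sim_outputs, code_outputs))
        if not math.isclose(sim, code, rel_tol=rel_tol, abs_tol=abs_tol)
    ]


# Hypothetical outputs of one module for the same test inputs.
sim = [0.0, 1.5, 3.0, 4.5]
code_ok = [0.0, 1.5, 3.0, 4.5]
code_bad = [0.0, 1.5, 3.1, 4.5]

matches = compare_outputs(sim, code_ok)      # empty list: code agrees with the model
mismatches = compare_outputs(sim, code_bad)  # indices of disagreeing samples
```

Comparing with a tolerance rather than exact equality is a design choice: floating-point results from a simulation and from compiled code can differ in the last bits without indicating a defect, and the acceptable tolerance would need to be justified per signal.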

As we develop the models, we use the Model Advisor in Simulink Verification and Validation to check our model for compliance with modeling standards, including guidelines from the MathWorks Automotive Advisory Board (MAAB) and guidelines that we developed in conjunction with MonkeyProof Solutions to facilitate efficient code generation.

Verifying the System Design

After modeling and simulating the system design in Simulink, we will work with a third-party partner to verify the design against requirements before implementing it in software. While relying on a third party for verification is not required by DO-178B, it is a good practice, because independent verification is a requirement.

We will provide our partner with our DOORS database and our Simulink models. They will use Simulink Verification and Validation to verify the models, and run Model Advisor checks against the model for certification credit. They will create and run test cases, which are themselves executable models, to help ensure full functional coverage of low-level requirements. Model coverage analysis is performed to satisfy the certification requirement that the low-level requirements be verifiable. When reused on the generated code, test cases that maximize model coverage also help maximize code coverage.

Generating and Verifying the Source Code

With Embedded Coder, we generate C source code from our models for the Deos real-time operating system (a Level A RTOS) from DDC-I. Engineers from MonkeyProof Solutions have suggested modeling changes that optimize the performance of the generated code.

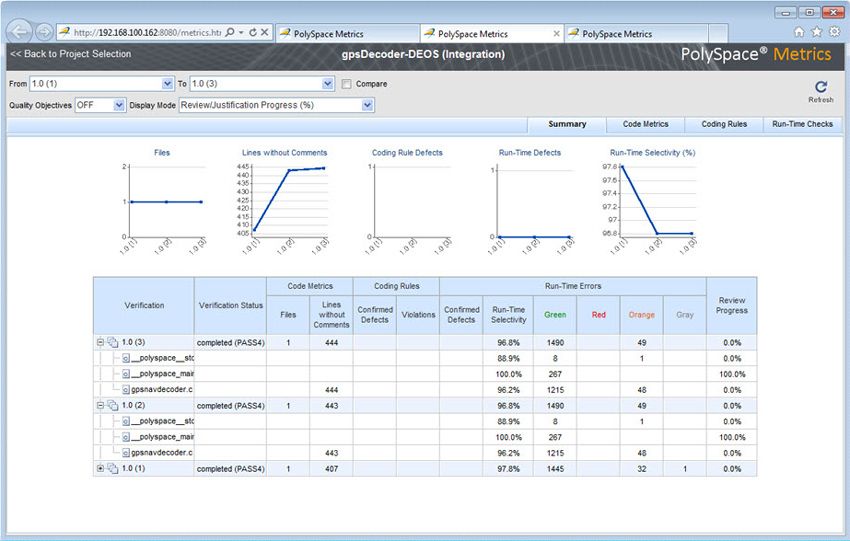

Embedded Coder automatically inserts comments that trace each line of the generated code back to the model and to the requirements in DOORS. We conduct manual code reviews using these comments. We also run automated verification checks for run-time errors, unreachable code, uninitialized variables, and compliance problems using Polyspace code verifiers (Figure 2). The error reports mark code that is proven to be free of run-time errors, enabling us to focus code reviews on other parts of the code.

Figure 2. Summary of results for GPS decoder code.

We reuse the test cases that were used to verify the low-level requirements. As with the system C code, we generate test code from the test case models with Embedded Coder. These tests are then run against the generated system code running on the RTOS. Additional low-level test cases complement the generated test cases.

We analyze the code coverage of the tests, including statement coverage, decision coverage, and condition coverage, and we also measure the cyclomatic complexity of the code. Both statement and decision coverage are required for DO-178B Level B certification.
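
The difference between statement coverage and decision coverage is easy to miss, so here is a small hand-instrumented sketch. It does not reflect how any coverage tool works internally; the function, the statement labels, and the test inputs are hypothetical.

```python
def clamp_negative(x, statements_hit, outcomes_seen):
    """Replace negative values with zero, recording what was executed."""
    statements_hit.add("s1")
    decision = x < 0
    outcomes_seen.add(decision)      # record which way the single decision went
    if decision:
        statements_hit.add("s2")
        x = 0
    statements_hit.add("s3")
    return x


def coverage(test_inputs):
    """Return (statement coverage, decision coverage) for a set of test inputs."""
    statements_hit, outcomes_seen = set(), set()
    for value in test_inputs:
        clamp_negative(value, statements_hit, outcomes_seen)
    # Three labeled statements; one decision with two possible outcomes.
    return len(statements_hit) / 3, len(outcomes_seen) / 2


one_test = coverage([-5])       # every statement runs, but the decision is never False
two_tests = coverage([-5, 3])   # both outcomes of the decision are exercised
```

With only the negative input, every statement executes yet the `if` is never evaluated as false, so statement coverage is complete while decision coverage is not. That gap is why the two metrics are measured separately.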

Our plans for software certification and tool usage have been approved by the FAA, including the Plan for Software Aspects of Certification (PSAC), which details our use of Model-Based Design.

Published 2012 - 92004v00