quantilePredict

Predict response quantile using bag of regression trees

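Syntax

YFit = quantilePredict(Mdl,X)

YFit = quantilePredict(Mdl,X,Name,Value)

[YFit,YW] = quantilePredict(___)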

Description

YFit = quantilePredict(Mdl,X) returns a vector of medians of the predicted responses at X, a table or matrix of predictor data, using the bag of regression trees Mdl. Mdl must be a TreeBagger model object.

YFit = quantilePredict(Mdl,X,Name,Value) uses additional options specified by one or more Name,Value pair arguments. For example, specify quantile probabilities or which trees to include for quantile estimation.

[YFit,YW] = quantilePredict(___) also returns a sparse matrix of response weights.
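The three calling forms can be sketched as follows. This is a minimal illustration on synthetic data, not an official example: the variable names (Xtrain, Ytrain, Xnew) and the data are hypothetical, and the sketch assumes the Statistics and Machine Learning Toolbox is installed.

```matlab
% Hypothetical synthetic training data, used only for illustration.
rng(0);                                    % for reproducibility
Xtrain = rand(100,3);
Ytrain = 2*Xtrain(:,1) + 0.2*randn(100,1);

% Bag of 30 regression trees.
Mdl = TreeBagger(30,Xtrain,Ytrain,'Method','regression');

Xnew = rand(5,3);                          % 5 new observations

% Form 1: default quantile (the median) for each row of Xnew.
med = quantilePredict(Mdl,Xnew);

% Form 2: name-value arguments select the quantile probabilities
% and restrict estimation to the first 10 trees.
q = quantilePredict(Mdl,Xnew,'Quantile',[0.1 0.9],'Trees',1:10);

% Form 3: also return the sparse matrix of response weights.
[YFit,YW] = quantilePredict(Mdl,Xnew);
```

Here q has one row per observation in Xnew and one column per requested quantile probability, and YW has one row per training response and one column per observation in Xnew.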

Tips

quantilePredict estimates the conditional distribution of the response using the training data every time you call it. To predict many quantiles efficiently, or quantiles for many observations efficiently, you should pass X as a matrix or table of observations and specify all quantiles in a vector using the Quantile name-value pair argument. That is, avoid calling quantilePredict within a loop.
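To make the tip concrete, the sketch below contrasts the discouraged loop with a single vectorized call. The data and variable names are hypothetical, and the sketch assumes the Statistics and Machine Learning Toolbox is available.

```matlab
% Hypothetical synthetic data, used only for illustration.
rng(1);                                    % for reproducibility
Xtrain = rand(200,2);
Ytrain = Xtrain(:,1) + 0.1*randn(200,1);
Mdl = TreeBagger(50,Xtrain,Ytrain,'Method','regression');

Xnew = rand(10,2);                         % 10 new observations
tau  = [0.25 0.5 0.75];                    % quantile probabilities

% Discouraged: every call re-estimates the conditional distribution
% from the training data, so this performs 30 separate estimations.
YLoop = zeros(size(Xnew,1),numel(tau));
for k = 1:size(Xnew,1)
    for q = 1:numel(tau)
        YLoop(k,q) = quantilePredict(Mdl,Xnew(k,:),'Quantile',tau(q));
    end
end

% Preferred: one call handles all observations and all quantiles.
YFit = quantilePredict(Mdl,Xnew,'Quantile',tau);   % 10-by-3 matrix
```

Both approaches produce a 10-by-3 matrix, but the single call passes the training data through the ensemble only once.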

Algorithms

The

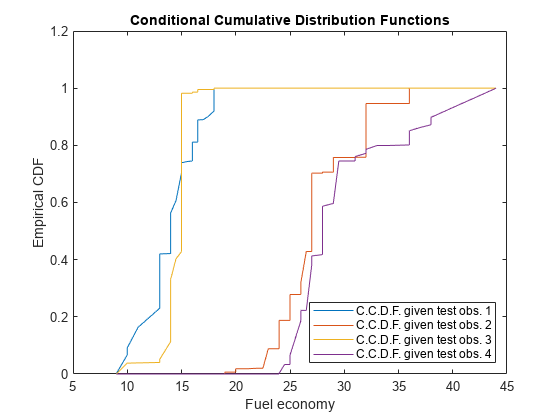

TreeBaggergrows a random forest of regression trees using the training data. Then, to implement quantile random forest,quantilePredictpredicts quantiles using the empirical conditional distribution of the response given an observation from the predictor variables. To obtain the empirical conditional distribution of the response:quantilePredictpasses all the training observations inMdl.Xthrough all the trees in the ensemble, and stores the leaf nodes of which the training observations are members.quantilePredictsimilarly passes each observation inXthrough all the trees in the ensemble.For each observation in

X,quantilePredict:Estimates the conditional distribution of the response by computing response weights for each tree.

For observation k in

X, aggregates the conditional distributions for the entire ensemble:n is the number of training observations (

size(Y,1)) and T is the number of trees in the ensemble (Mdl.NumTrees).

For observation k in

X, the τ quantile or, equivalently, the 100τ% percentile, is
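The quantile rule, taking the smallest response value at which the weighted empirical distribution reaches τ, can be sketched in a few lines. The responses and weights below are hypothetical toy values for a single observation. This illustrates the definition only and is not the internal implementation of quantilePredict.

```matlab
% Hypothetical training responses and already-aggregated response weights
% for one observation; the weights sum to 1.
y   = [3 1 2 5];             % training responses Y_j
w   = [0.1 0.4 0.3 0.2];     % response weight attached to each Y_j
tau = 0.5;                   % quantile probability

[ySorted,idx] = sort(y);     % order the responses
F = cumsum(w(idx));          % weighted empirical CDF at each sorted response

% Smallest response whose CDF value is at least tau.
yTau = ySorted(find(F >= tau,1,'first'));
```

For these toy values the sorted responses are [1 2 3 5] with cumulative weights [0.4 0.7 0.8 1.0], so yTau is 2. With a fitted model, a column of the YW output could play the role of w, with Mdl.Y as the responses.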

This process describes how quantilePredict uses all specified weights.

1. For all training observations j = 1,...,n and all chosen trees t = 1,...,T, quantilePredict attributes the product $v_{tj} = b_{tj} w_{j,obs}$ to training observation j (stored in Mdl.X(j,:) and Mdl.Y(j)). $b_{tj}$ is the number of times observation j is in the bootstrap sample for tree t. $w_{j,obs}$ is the observation weight in Mdl.W(j).

2. For each chosen tree, quantilePredict identifies the leaves in which each training observation falls. Let $S_t(x_j)$ be the set of all observations contained in the leaf of tree t of which observation j is a member.

3. For each chosen tree, quantilePredict normalizes all weights within a particular leaf to sum to 1, that is,

   $$v^*_{tj} = \frac{v_{tj}}{\sum_{i \in S_t(x_j)} v_{ti}}$$

4. For each training observation and tree, quantilePredict incorporates tree weights ($w_{t,tree}$) specified by TreeWeights, that is, $w^*_{tj,tree} = w_{t,tree} v^*_{tj}$. Trees not chosen for prediction have 0 weight.

5. For all test observations k = 1,...,K in X and all chosen trees t = 1,...,T, quantilePredict predicts the unique leaves in which the observations fall, and then identifies all training observations within the predicted leaves. quantilePredict attributes the weight $u_{tj}$ such that

   $$u_{tj} = \begin{cases} w^*_{tj,tree}, & \text{if } x_k \in S_t(x_j) \\ 0, & \text{otherwise} \end{cases}$$

6. quantilePredict sums the weights over all chosen trees, that is,

   $$u_j = \sum_{t=1}^{T} u_{tj}$$

7. quantilePredict creates response weights by normalizing the weights so that they sum to 1, that is,

   $$w^*_j = \frac{u_j}{\sum_{i=1}^{n} u_i}$$
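The weighting steps can be traced by hand on a tiny example. The sketch below uses hypothetical bootstrap counts and leaf assignments for four training observations, two trees, and a single test observation. It follows the description above and is not the code that quantilePredict runs. All variable names are illustrative.

```matlab
% Toy setup (all values hypothetical): n = 4 training observations, T = 2 trees.
b     = [1 0 2 1;     % b(t,j): times observation j is in the bootstrap sample of tree t
         2 1 0 1];
wObs  = [1 1 1 1];    % observation weights, playing the role of Mdl.W
wTree = [1 1];        % tree weights, playing the role of TreeWeights
leaf  = [1 1 2 2;     % leaf(t,j): leaf of tree t containing training observation j
         1 2 2 2];
leafK = [1; 2];       % leafK(t): leaf of tree t containing the test observation

[T,n] = size(b);
u = zeros(T,n);
for t = 1:T
    v = b(t,:) .* wObs;                      % bootstrap count times observation weight
    for j = 1:n
        inLeaf = leaf(t,:) == leaf(t,j);     % training observations sharing j's leaf
        vStar  = v(j) / sum(v(inLeaf));      % normalize within the leaf
        wStar  = wTree(t) * vStar;           % apply the tree weight
        if leaf(t,j) == leafK(t)             % keep only the test observation's leaf
            u(t,j) = wStar;
        end
    end
end
uSum = sum(u,1);                             % sum over trees
w    = uSum / sum(uSum);                     % response weights, summing to 1
```

For these inputs, tree 1 contributes [1 0 0 0] and tree 2 contributes [0 0.5 0 0.5], so the response weights are [0.5 0.25 0 0.25]. Observation 3 receives no weight because it never shares a leaf with the test observation in a tree whose bootstrap sample contains it.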

References

[1] Breiman, L. “Random Forests.” Machine Learning, Vol. 45, 2001, pp. 5–32.

[2] Meinshausen, N. “Quantile Regression Forests.” Journal of Machine Learning Research, Vol. 7, 2006, pp. 983–999.

Version History

Introduced in R2016b