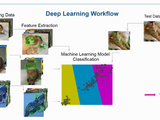

These examples walk through the three demos presented in the webinar "Object Recognition: Deep Learning and Machine Learning for Computer Vision".

The demos are as follows:

- BagOfFeatures for scene classification

- Transfer Learning - a Deep Learning approach

- Deep Learning as a Feature Extractor

The webinar can be viewed here: https://www.mathworks.com/videos/object-recognition-deep-learning-and-machine-learning-for-computer-vision-121144.html

Cite As

Johanna Pingel (2026). Demos from "Object Recognition: Deep Learning" Webinar (https://nl.mathworks.com/matlabcentral/fileexchange/58320-demos-from-object-recognition-deep-learning-webinar), MATLAB Central File Exchange. Retrieved .

General Information

- Version 1.0.0.1 (12.2 KB)

MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux