Train Ensemble Classifiers Using Classification Learner App

This example shows how to construct ensembles of classifiers in the Classification Learner app. Ensemble classifiers meld results from many weak learners into one high-quality ensemble predictor. Their properties, such as prediction speed, memory usage, and interpretability, depend on the choice of ensemble algorithm, but ensemble classifiers tend to be slow to fit because they often need many learners.
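To see the weak-learner idea at the command line, here is a minimal sketch. It assumes Statistics and Machine Learning Toolbox and the fisheriris.csv file that this example loads below. The sketch fits one decision tree with fitctree and a bagged ensemble of 30 trees with fitcensemble, timing each fit. The Bag method and the learner count are illustrative choices and are not necessarily the app's preset settings. Timings depend on your hardware.

```matlab
% Load the Fisher iris data as a table (response variable: Species).
fishertable = readtable("fisheriris.csv");

% A single decision tree: one learner.
tic
treeMdl = fitctree(fishertable, "Species");
treeTime = toc;

% A bagged ensemble that combines 30 decision trees (weak learners).
% "Bag" and 30 learners are illustrative, not the app's exact preset.
rng("default")  % bagging resamples the data, so fix the seed
tic
ensMdl = fitcensemble(fishertable, "Species", ...
    "Method", "Bag", "NumLearningCycles", 30);
ensTime = toc;

fprintf("Single tree: %.3f s, ensemble of %d trees: %.3f s\n", ...
    treeTime, ensMdl.NumTrained, ensTime)
```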

In MATLAB®, load the fisheriris data set into a table to use for classification.

```matlab
fishertable = readtable("fisheriris.csv");
```

On the Apps tab, in the Machine Learning and Deep Learning group, click Classification Learner.

On the Learn tab, in the File section, click New Session > From Workspace.

In the New Session from Workspace dialog box, select the table fishertable from the Data Set Variable list (if necessary). Observe that the app has selected response and predictor variables based on their data type. Petal and sepal length and width are predictors. Species is the response that you want to classify. For this example, do not change the selections.

Click Start Session.

Classification Learner creates a scatter plot of the data.

Use the scatter plot to investigate which variables are useful for predicting the response. Select different variables in the X- and Y-axis controls to visualize the distribution of species and measurements. Observe which variables separate the species colors most clearly.
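The app draws this plot for you. As a rough command-line counterpart, this sketch plots two predictors grouped by species with gscatter. For illustration, it assumes that columns 3 and 4 of fishertable hold the petal measurements, mirroring the column order of meas in fisheriris.mat. The axis labels come from the table's own variable names. Change the column indices to explore other pairs.

```matlab
fishertable = readtable("fisheriris.csv");
names = fishertable.Properties.VariableNames;

% Assumption: columns 3 and 4 hold the petal measurements.
x = fishertable{:, 3};
y = fishertable{:, 4};

% Color each point by species, as the app's scatter plot does.
gscatter(x, y, fishertable.Species)
xlabel(names{3})
ylabel(names{4})
```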

Train a selection of ensemble models. On the Learn tab, in the Models section, click the arrow to expand the list of classifiers, and under Ensemble Classifiers, click All Ensembles. Then, in the Train section, click Train All and select Train All.

Note

If you have Parallel Computing Toolbox™, then the Use Parallel button is selected by default. After you click Train All and select Train All or Train Selected, the app opens a parallel pool of workers. During this time, you cannot interact with the software. After the pool opens, you can continue to interact with the app while models train in parallel.

If you do not have Parallel Computing Toolbox, then the Use Background Training check box in the Train All menu is selected by default. After you select an option to train models, the app opens a background pool. After the pool opens, you can continue to interact with the app while models train in the background.

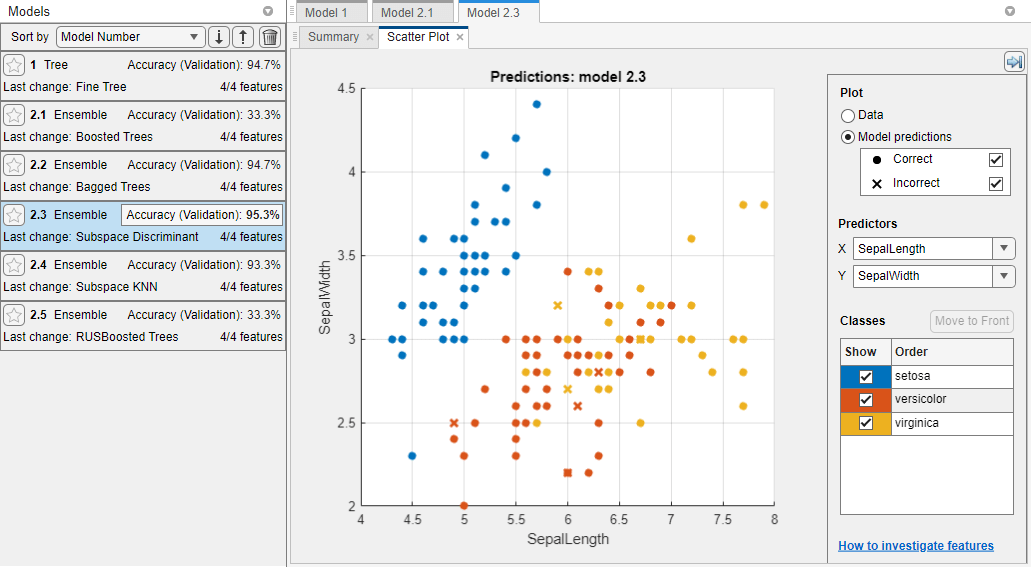

Classification Learner trains one of each ensemble classification option in the gallery, as well as the default fine tree model. In the Models pane, the app outlines the Accuracy (Validation) score of the best model in a box. Classification Learner also displays a validation confusion matrix for the first ensemble model (Boosted Trees).
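By default, Classification Learner validates models with 5-fold cross-validation. The following sketch computes a comparable cross-validated accuracy for a bagged-tree ensemble at the command line, using the same data file. The Bag method and the 30 learners are illustrative. The value need not match the app's score because fold assignment and preset settings can differ.

```matlab
fishertable = readtable("fisheriris.csv");

% Cross-validated bagged-tree ensemble with 5 folds.
rng("default")  % reproducible fold assignment
cvMdl = fitcensemble(fishertable, "Species", ...
    "Method", "Bag", "NumLearningCycles", 30, "KFold", 5);

% kfoldLoss returns the misclassification rate on the held-out folds;
% accuracy is its complement.
validationAccuracy = 1 - kfoldLoss(cvMdl);
fprintf("Validation accuracy: %.1f%%\n", 100 * validationAccuracy)
```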

Select a model in the Models pane to view the results. For example, select the Subspace Discriminant model (model 2.3). Inspect the model Summary tab, which displays the Training Results and Additional Training Results metrics, calculated on the validation set.

Examine the scatter plot for the trained model. On the Learn tab, in the Plots and Results section, click the arrow to open the gallery, and then click Scatter in the Validation Results group. Misclassified points are shown as an X.

Note

Validation introduces some randomness into the results. Your model validation results can vary from the results shown in this example.

Inspect the accuracy of the predictions in each class. On the Learn tab, in the Plots and Results section, click the arrow to open the gallery, and then click Confusion Matrix (Validation) in the Validation Results group. View the matrix of true class and predicted class results.
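You can also build a validation confusion matrix from out-of-fold predictions. This minimal sketch uses the same illustrative bagged-tree settings as a stand-in for an app preset. It calls kfoldPredict to get one prediction per observation from the model that did not see that observation during training. It then summarizes the results with confusionmat and confusionchart.

```matlab
fishertable = readtable("fisheriris.csv");

% Cross-validated bagged-tree ensemble (illustrative settings).
rng("default")
cvMdl = fitcensemble(fishertable, "Species", ...
    "Method", "Bag", "NumLearningCycles", 30, "KFold", 5);

% Out-of-fold predictions: each observation is predicted by the model
% trained on the folds that did not contain it.
predictedLabels = kfoldPredict(cvMdl);
trueLabels = fishertable.Species;

% Counts of true class (rows) versus predicted class (columns).
[C, order] = confusionmat(trueLabels, predictedLabels);
disp(order')
disp(C)

% Chart similar to the app's Confusion Matrix (Validation) plot.
confusionchart(trueLabels, predictedLabels);
```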

For each remaining model, select the model in the Models pane, open the validation confusion matrix, and then compare the results across the models.

Choose the best model (the best score is highlighted in the Accuracy (Validation) box). To improve the model, try including different features in the model. See if you can improve the model by removing features with low predictive power.

First, duplicate the model by right-clicking the model and selecting Duplicate.

Investigate features to include or exclude using one of these methods.

- Use the parallel coordinates plot. On the Learn tab, in the Plots and Results section, click the arrow to open the gallery, and then click Parallel Coordinates in the Validation Results group. Keep predictors that separate classes well.

  In the model Summary tab, you can specify the predictors to use during training. Click Feature Selection to expand the section, and specify predictors to remove from the model.

- Use a feature ranking algorithm. On the Learn tab, in the Options section, click Feature Selection. In the Default Feature Selection tab, specify the feature ranking algorithm you want to use and the number of features to keep among the highest ranked features. The bar graph can help you decide how many features to use.

  Click Save and Apply to save your changes. The new feature selection is applied to the existing draft model in the Models pane and will be applied to new draft models that you create using the gallery in the Models section of the Learn tab.
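You can also try the rank-then-retrain approach at the command line. This sketch uses MRMR ranking through fscmrmr as one example of a feature ranking algorithm. It keeps the two highest-ranked predictors, an arbitrary number chosen for illustration. It then retrains a cross-validated bagged ensemble on those predictors through the PredictorNames name-value argument. The sketch assumes Species is the response variable in fishertable.

```matlab
fishertable = readtable("fisheriris.csv");

% Predictor names: every variable except the response.
allNames = fishertable.Properties.VariableNames;
predictorNames = allNames(~strcmp(allNames, "Species"));

% Rank predictors with MRMR. idx orders the predictors from most to
% least important, and scores holds one score per predictor.
[idx, scores] = fscmrmr(fishertable, "Species");

% Bar graph of the ranked scores, similar to the app's ranking chart.
bar(scores(idx))
xticklabels(predictorNames(idx))

% Keep the top-ranked predictors (2 is an illustrative choice).
numToKeep = 2;
keep = predictorNames(idx(1:numToKeep));

% Retrain a cross-validated ensemble on the reduced predictor set.
rng("default")
cvMdl = fitcensemble(fishertable, "Species", "PredictorNames", keep, ...
    "Method", "Bag", "NumLearningCycles", 30, "KFold", 5);
fprintf("Kept %s; validation accuracy: %.3f\n", ...
    strjoin(keep, ", "), 1 - kfoldLoss(cvMdl))
```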

Train the model. On the Learn tab, in the Train section, click Train All and select Train Selected to train the model using the new options. Compare results among the classifiers in the Models pane.

Choose the best model in the Models pane. To try to improve the model further, try changing its hyperparameters. First, duplicate the model by right-clicking the model and selecting Duplicate. Then, try changing a hyperparameter setting in the model Summary tab. Train the new model by clicking Train All and selecting Train Selected in the Train section.
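To compare hyperparameter settings outside the app, you can train two versions of a model on identical folds. This sketch uses a subspace ensemble of discriminant learners as a stand-in for the Subspace Discriminant preset. It varies NumLearningCycles and NPredToSample, and the specific values are illustrative rather than recommendations. A shared cvpartition object keeps the fold assignment the same, so the accuracy difference reflects the settings rather than the split.

```matlab
fishertable = readtable("fisheriris.csv");

% One stratified 5-fold partition shared by both models.
rng("default")
cvp = cvpartition(fishertable.Species, "KFold", 5);

% Baseline: subspace ensemble of discriminant learners.
baseline = fitcensemble(fishertable, "Species", "Method", "Subspace", ...
    "Learners", "discriminant", "NumLearningCycles", 30, ...
    "NPredToSample", 2, "CVPartition", cvp);

% Variant: more learners and larger random predictor subspaces.
variant = fitcensemble(fishertable, "Species", "Method", "Subspace", ...
    "Learners", "discriminant", "NumLearningCycles", 60, ...
    "NPredToSample", 3, "CVPartition", cvp);

accBaseline = 1 - kfoldLoss(baseline);
accVariant = 1 - kfoldLoss(variant);
fprintf("Baseline: %.3f, variant: %.3f\n", accBaseline, accVariant)
```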

For information on the settings to try and the strengths of different ensemble model types, see Ensemble Classifiers.

You can export a full or compact version of the trained model to the workspace. On the Learn tab, in the Export section, click Export Model and select Export Model. To exclude the training data and export a compact model, clear the check box in the Export Classification Model dialog box. You can still use the compact model for making predictions on new data. In the dialog box, click OK to accept the default variable name.
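For a model exported from the app, you make predictions by calling the predictFcn field of the exported structure, for example yfit = trainedModel.predictFcn(T) with the default variable name trainedModel. At the command line, the compact function is the analogue of exporting without training data. This sketch uses a bagged-tree ensemble and treats the first five rows of fishertable as hypothetical stand-ins for new data. It shows that a compact model can still predict.

```matlab
fishertable = readtable("fisheriris.csv");

% Full model: keeps a copy of the training data.
rng("default")
fullMdl = fitcensemble(fishertable, "Species", ...
    "Method", "Bag", "NumLearningCycles", 30);

% Compact model: drops the stored training data but can still predict.
compactMdl = compact(fullMdl);

% Hypothetical "new" observations: the first five rows of the table.
newData = fishertable(1:5, :);
predictedSpecies = predict(compactMdl, newData);
disp(predictedSpecies)
```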

To examine the code for training this classifier, click Generate Function.

Use the same workflow to evaluate and compare the other classifier types you can train in Classification Learner.

To try all the nonoptimizable classifier model presets available for your data set:

1. On the Learn tab, in the Models section, click the arrow to open the gallery of classification models.
2. In the Get Started group, click All.
3. In the Train section, click Train All and select Train All.

To learn about other classifier types, see Train Classification Models in Classification Learner App.

Related Topics

- Train Classification Models in Classification Learner App

- Select Data for Classification or Open Saved App Session

- Choose Classifier Options

- Feature Selection and Feature Transformation Using Classification Learner App

- Visualize and Assess Classifier Performance in Classification Learner

- Export Classification Model to Predict New Data

- Train Decision Trees Using Classification Learner App