Use Complete Poll in Pattern Search

The patternsearch solver attempts to find a minimum by evaluating the objective function at a pattern of points. The solver can evaluate all the points in the pattern, called a complete poll. Or, to try to save time, patternsearch can stop the evaluations as soon as it locates a point with a better objective function value. This example shows some of the effects of using a complete poll.

Consider the following objective function.

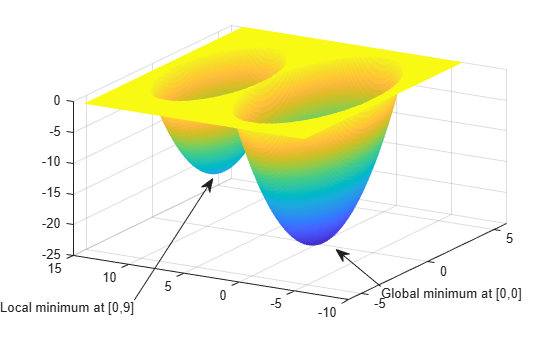

The global minimum of the function occurs at (0, 0), where its value is –25. However, the function also has a local minimum at (0, 9), where its value is –16.

This code creates the function.

function z = poll_example(x) if x(1)^2 + x(2)^2 <= 25 z = x(1)^2 + x(2)^2 - 25; elseif x(1)^2 + (x(2) - 9)^2 <= 16 z = x(1)^2 + (x(2) - 9)^2 - 16; else z = 0; end end

Plot the function.

[X,Y] = meshgrid(-6:.1:6,-6:.1:14); Z = zeros(size(X)); for i = 1:size(X,1) for j=1:size(X,2) Z(i,j) = poll_example([X(i,j),Y(i,j)]); end end surf(X,Y,Z,LineStyle="none"); view([-60,30]) annotation("textarrow",[0.24 0.38],... [0.11 0.47],String="Local minimum at [0,9]"); annotation("textarrow",[0.68 0.6],... [0.15 0.26],String="Global minimum at [0,0]");

To search for the minimum, run a pattern search on the function. To have patternsearch use he standard mesh, set the ScaleMesh option to false.

options = optimoptions("patternsearch",Display="iter",... ScaleMesh=false); [x,fval] = patternsearch(@poll_example,[0,5],... [],[],[],[],[],[],[],options)

Iter Func-count f(x) MeshSize Method

0 1 0 1

1 3 -7 2 Successful Poll

2 5 -15 4 Successful Poll

3 9 -15 2 Refine Mesh

4 13 -15 1 Refine Mesh

5 15 -16 2 Successful Poll

6 19 -16 1 Refine Mesh

7 23 -16 0.5 Refine Mesh

8 27 -16 0.25 Refine Mesh

9 31 -16 0.125 Refine Mesh

10 35 -16 0.0625 Refine Mesh

11 39 -16 0.03125 Refine Mesh

12 43 -16 0.01562 Refine Mesh

13 47 -16 0.007812 Refine Mesh

14 51 -16 0.003906 Refine Mesh

15 55 -16 0.001953 Refine Mesh

16 59 -16 0.0009766 Refine Mesh

17 63 -16 0.0004883 Refine Mesh

18 67 -16 0.0002441 Refine Mesh

19 71 -16 0.0001221 Refine Mesh

20 75 -16 6.104e-05 Refine Mesh

21 79 -16 3.052e-05 Refine Mesh

22 83 -16 1.526e-05 Refine Mesh

23 87 -16 7.629e-06 Refine Mesh

24 91 -16 3.815e-06 Refine Mesh

25 95 -16 1.907e-06 Refine Mesh

26 99 -16 9.537e-07 Refine Mesh

patternsearch stopped because the mesh size was less than options.MeshTolerance.

x = 1×2

0 9

fval = -16

To understand what patternsearch does in its poll, evaluate the algorithmic steps. The algorithm begins by evaluating the function at the initial point, . The poll evaluates the following during its first iterations.

As soon as the search polls the mesh point (0, 6), at which the objective function value is less than at the initial point, it stops polling the current mesh and sets the current point at the next iteration to (0, 6). Consequently, the search moves toward the local minimum at (0, 9) at the first iteration. You see this by looking at the first two lines of the command line display.

Iter Func-count f(x) MeshSize Method

0 1 0 1

1 3 -7 2 Successful Poll

Note that the pattern search performs only two evaluations of the objective function at the first iteration, increasing the total function count from 1 to 3.

Next, set the UseCompletePoll option to true and rerun the optimization.

options.UseCompletePoll = true;

[x,fval] = patternsearch(@poll_example,[0,5],...

[],[],[],[],[],[],[],options)Iter Func-count f(x) MeshSize Method

0 1 0 1

1 5 -9 2 Successful Poll

2 9 -21 4 Successful Poll

3 13 -21 2 Refine Mesh

4 17 -25 4 Successful Poll

5 21 -25 2 Refine Mesh

6 25 -25 1 Refine Mesh

7 29 -25 0.5 Refine Mesh

8 33 -25 0.25 Refine Mesh

9 37 -25 0.125 Refine Mesh

10 41 -25 0.0625 Refine Mesh

11 45 -25 0.03125 Refine Mesh

12 49 -25 0.01562 Refine Mesh

13 53 -25 0.007812 Refine Mesh

14 57 -25 0.003906 Refine Mesh

15 61 -25 0.001953 Refine Mesh

16 65 -25 0.0009766 Refine Mesh

17 69 -25 0.0004883 Refine Mesh

18 73 -25 0.0002441 Refine Mesh

19 77 -25 0.0001221 Refine Mesh

20 81 -25 6.104e-05 Refine Mesh

21 85 -25 3.052e-05 Refine Mesh

22 89 -25 1.526e-05 Refine Mesh

23 93 -25 7.629e-06 Refine Mesh

24 97 -25 3.815e-06 Refine Mesh

25 101 -25 1.907e-06 Refine Mesh

26 105 -25 9.537e-07 Refine Mesh

patternsearch stopped because the mesh size was less than options.MeshTolerance.

x = 1×2

0 0

fval = -25

This time, the pattern search finds the global minimum at (0, 0). The difference between this run and the previous one is that with UseCompletePoll set to true, at the first iteration the pattern search polls all four mesh points.

Because the last mesh point has the lowest objective function value, the pattern search selects it as the current point at the next iteration. The first two lines of the command-line display show this.

Iter Func-count f(x) MeshSize Method

0 1 0 1

1 5 -9 2 Successful Poll

In this case, the objective function is evaluated four times at the first iteration. As a result, the pattern search moves toward the global minimum at (0, 0).

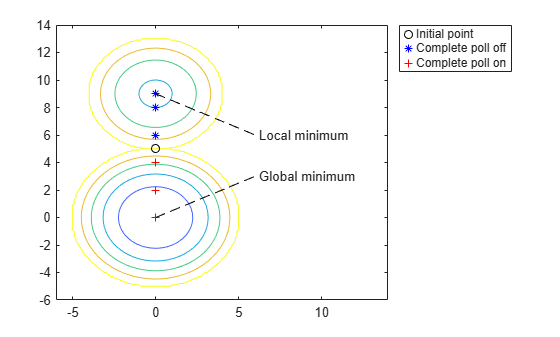

The following figure compares the sequence of points returned when Complete poll is set to Off with the sequence when Complete poll is On. The code for the function appears after the figure.

graph_poll_example

function graph_poll_example ff = []; tt = []; [X,Y] = meshgrid(-6:.1:14,-6:.1:14); Z = zeros(size(X)); for i = 1:size(X,1) for j=1:size(X,2) Z(i,j) = poll_example([X(i,j),Y(i,j)]); end end contour(X,Y,Z); hold on h = plot(0,5,"ko"); opts = optimoptions("patternsearch", ... OutputFcn=@plotpnt, ... UseCompletePoll=false, ... Display="off",... ScaleMesh=false); [thex,thefv] = patternsearch(@poll_example,[0,5],... [],[],[],[],[],[],[],opts); opts.UseCompletePoll=true; [thex,thefv] = patternsearch(@poll_example,[0,5],... [],[],[],[],[],[],[],opts); hold off line([0,6],[9,6],Color="k",LineStyle="--") line([0,6],[0,3],Color="k",LineStyle="--") text(6.2,6,"Local minimum") text(6.2,3,"Global minimum") legend([h,ff,tt],"Initial point","Complete poll off","Complete poll on") function [stop,opts,optchanged] = plotpnt(optimvalues,opts,flag) if strcmp(flag,"iter") if opts.UseCompletePoll == true tt = plot(optimvalues.x(1),optimvalues.x(2),"r+"); else ff = plot(optimvalues.x(1),optimvalues.x(2),"b*"); end end stop = false; optchanged = false; end end